
In 2024, champions of surveillance reform in the House passed the Fourth Amendment Is Not For Sale Act, which would force government agencies to obtain probable cause warrants before collecting Americans’ most sensitive and personal data scraped from apps and sold by data brokers. House passage of this measure creates powerful momentum for this major surveillance reform, in the next Congress if not in this one.

Congress also imposed strong reporting and accountability measures on the FBI. The Bureau must now report the number of times it searches, or “queries,” the communications of Americans in FISA Section 702 databases. The reauthorization also allows the leaders of both Houses of Congress and of the House and Senate Judiciary and Intelligence Committees to attend hearings of the secret FISA Court, something House Judiciary Committee Chairman Jim Jordan (R-OH) and Ranking Member Jerry Nadler (D-NY) are publicly planning to do.

Congress did reauthorize Section 702, the foreign intelligence surveillance authority, without requiring warrants for queries of the communications of Americans caught up in this global data trawl. Even here, however, there were bright spots. Advocates for the intelligence community avoided a warrant requirement for surveillance of Americans by the narrowest possible margin: a tie vote. And champions of reform succeeded in shortening the period before the next reauthorization of Section 702 from five years to two. As a result of this close vote and narrow window, debate is already well underway on ways to improve Section 702.

On the negative side, House Intelligence Committee leaders managed to insert into Section 702 reauthorization a measure we called “Make Everyone a Spy” – now law – that requires many businesses with internet-related communications equipment to allow warrantless inspection of customer data. At this writing, efforts are underway to narrow this provision.
Champions of Reform

Throughout this year, many Members stepped forward to take a strong, bold stance for surveillance reform. These include:

Other prominent and diligent House surveillance reformers include:

In the Senate:

The Federal Government’s “Beneficial Ownership” Snoop

Millions of small business owners are about to be hit with a nasty surprise. The Corporate Transparency Act (CTA), which passed Congress as part of the must-pass National Defense Authorization Act of 2021, goes into effect this year. Advertised as a way to combat money laundering, this new law requires small businesses to report their “beneficial owners” to the U.S. Department of the Treasury’s Financial Crimes Enforcement Network (FinCEN).

This reporting requirement applies to any small business with 20 or fewer employees. In plain English, a small business must give the government the name of anyone who controls it or holds a 25 percent or greater interest in it. By Jan. 1, 2025, small businesses must submit the full legal name, date of birth, current residential or business address, and a unique identifier from a government ID for each of their beneficial owners.

There are significant privacy risks at stake in this seemingly innocuous law, beginning with the widespread access multiple federal agencies will have to this new database. This law, which covers 32 million existing companies and will suck in an additional 5 million new companies every year, threatens anyone who makes a mistake or files an incomplete submission with up to $10,000 in fines and up to two years in prison. “The CTA will potentially make a felon out of any unsuspecting person who is simply trying to make a living in his or her own lawful business or who is trying to start one and makes a simple mistake for violations,” says the National Small Business Association (NSBA).

The “beneficial ownership” provision is one more way for the federal government to break down the walls of financial privacy in its quest to comprehensively track Americans’ finances. Consider another big bill, the recent Infrastructure Investment and Jobs Act of 2021, which requires cryptocurrency transactions of $10,000 or more to be reported to the government within 15 days. Incorrect or missing information may result in a $25,000 fine or five years in prison. In addition, the Cato Institute reports that new regulations under consideration would hold financial advisors accountable to “elements of the Bank Secrecy Act, which currently compels banks to turn over certain financial data to the feds.” It is likely that your financial advisor will soon be required to snitch on you.
This undermines the whole concept of a fiduciary, someone who is by law supposed to be loyal to your interests. All of these measures are justified by the quest to track the money networks of criminals, terrorists, and drug dealers. But the data these authorities generate will be available, without a warrant, to the IRS, the FBI, the ATF, the Department of Homeland Security, and just about any agency that wants to investigate you for personal activities or statements that some official deems suspicious.

The CTA’s “beneficial ownership” provision represents a new assertion of federal power over small business. Since before the Constitution, the regulation of small business has been under the purview of the states. Now Washington is assembling a database with which it can heap new regulations on small business regardless of state policies.

The NSBA, which is challenging this law in court, estimates that complexities in business ownership will require companies to spend an average of $8,000 a year to comply with this law. NSBA’s federal lawsuit names Huntsville business owner Isaac Winkles as a plaintiff. NSBA and Winkles won summary judgment from Judge Liles Burke of the U.S. District Court for the Northern District of Alabama, who held the beneficial ownership requirement unconstitutional because it exceeds the enumerated powers of Congress. While the government appeals to the Eleventh Circuit, FinCEN maintains that it will exempt small businesses from this requirement only if they were members of NSBA on or before March 1.

These encroachments are steady, and their champions on the Hill are growing bolder in financial surveillance. The good news is that privacy activists have just acquired 32 million new allies.

Why did the “unmasking” of Americans’ identities in the global data trawl of U.S. intelligence agencies increase by 172 percent, from 11,511 times in 2022 to 31,330 times in 2023?

Government officials briefing the media say that most of this increase was a defensive response to a hostile intelligence agency launching a massive cyberattack on U.S. infrastructure, possibly infiltrating the digital systems of dams, power plants, or the like. What we do know for sure is that this authority has been abused before.

Unmasking occurs when American citizens or “U.S. persons” are caught up, incidentally, in warrantless foreign surveillance. When this happens, the identities of these Americans are routinely hidden from government agents, or “masked.” But senior officials can request that the NSA “unmask” those individuals. This should be a relatively rare occurrence. Yet for some reason, over a 12-month period spanning 2015 and 2016, the Obama administration unmasked 9,217 persons. Former UN Ambassador Samantha Power, or someone acting in her name, was a prolific unmasker: Power’s name was used to request unmasking of Americans more than 260 times. Large-scale unmasking continued under the Trump administration, with 16,721 unmaskings in 2018, an increase of 7,000 from the year before. In recent years, the number hovered around 10,000. Now it is three times that many.

This would be a concern if some subset of these unmaskings (most of which involve an email account or IP address, not a name) targeted named individuals for political purposes. Consider that in 2016, at least 16 Obama administration officials, including then-Vice President Joe Biden, requested unmaskings of Donald Trump’s advisors. Outgoing National Security Advisor Susan Rice took a particular interest in unmasking members of President-elect Trump’s transition team. We are left to wonder whether this entire rise in unmasking numbers can be explained away by Chinese or Russian hackers, or whether some portion of it reflects the use of this authority for political purposes. Were prominent politicians, officeholders, or candidates unmasked?
These raw numbers come from the government’s Annual Statistical Transparency Report. This report on intelligence community activities from the Office of the Director of National Intelligence offers revealing numbers, but often without the detail or explanation needed to account for such jumps. All we have to rely on are media briefings that at times seem more forthcoming than the briefings available to Members of Congress, even those tasked with oversight of intelligence agencies in the House and Senate Judiciary Committees. As these numbers rise, the American people deserve more information and a solid assurance that these authorities will never again be used for political purposes by either party.

We needed a little perspective before reporting on the historic showdown over the reauthorization of FISA Section 702 that ended on April 19 with a late-night Senate vote. The bottom line: The surveillance reform coalition finally made it to the legislative equivalent of the Super Bowl. We won’t be taking home any Super Bowl rings, but we gained a lot of yardage and racked up impressive touchdowns.

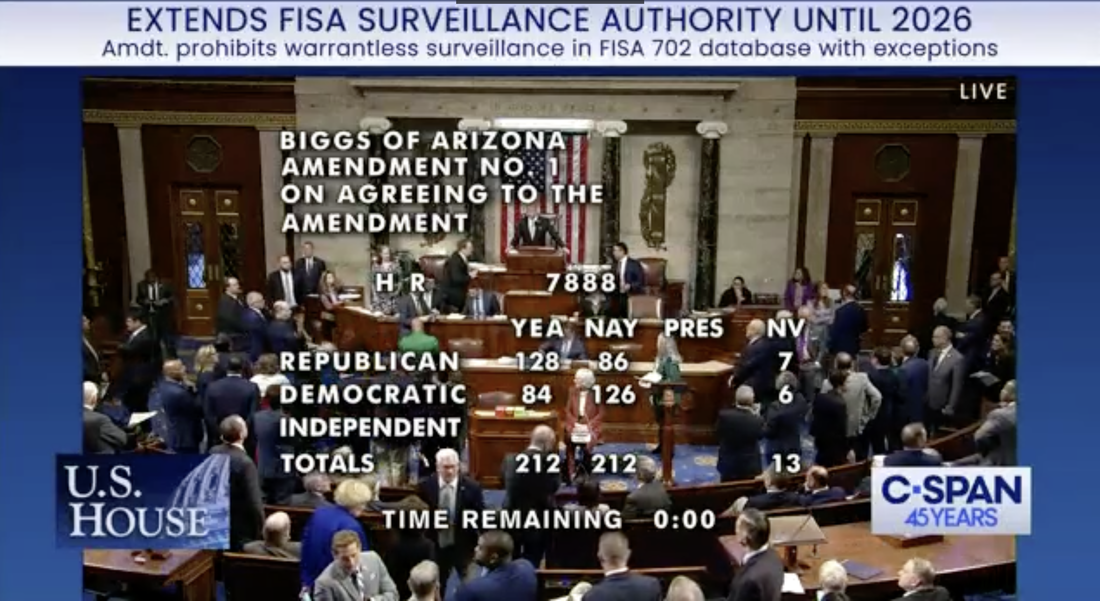

For years, PPSA has coordinated with a wide array of leading civil liberties organizations across the ideological spectrum, building toward that key moment. We worked hard and enjoyed the support of our followers, who flooded Congress with calls and emails supporting privacy and surveillance reform. So what was the result? We failed to get a warrant requirement for Section 702 data but came within one vote of winning it in the House. There was also a lot of good news, including new reforms that should not be overlooked. And where the news was bad, there are silver linings that gleam.

We come out of this legislative fracas bloodied but energized. We put together a durable left-right coalition in which House Judiciary Committee Chairman Jim Jordan and Ranking Member Jerry Nadler, as well as the heads of the Freedom and Progressive caucuses, worked side by side. For the first time, our surveillance coalition had the intelligence community and their champions on the run. We lost the warrant provision for Section 702 only by a tie vote. Had every House Member who supported our position been in attendance, we would have won. This bodes well for the next time Section 702 reauthorization comes up. We will be ready. Let’s not forget that a recent bipartisan YouGov poll shows that 80 percent of Americans support warrant requirements. We sense a gathering of momentum, and we look forward to preparing for the next big round in April 2026.

The risks and benefits of reverse searches are revealed in the capital murder case of Aaron Rayshan Wells. Although a security camera recorded a number of armed men entering a home in Texas where a murder took place, the lower portions of the men’s faces were covered. Wells was identified in this murder investigation by a reverse search enabled by geofencing.

A lower court upheld the geofence in this case as sufficiently narrow. It covered an area near the location of the homicide within a precise timeframe on the day of the crime, 2:45 to 3:10 a.m. But in a recent amicus brief, the ACLU identifies dangers in this reverse search, even within such strict limits.

What are the principles at stake in this practice? Let’s start with the Fourth Amendment, which places hurdles government agents must clear before obtaining a warrant for a search – “no warrants shall issue, but upon probable cause, supported by Oath or affirmation, and particularly describing the place to be searched, and the persons or things to be seized.” The founders’ tight language was formed by experience. In colonial times, the King’s agents could act on a suspicion of smuggling by ransacking the homes of all the shippers in Boston. Forcing the government to name a place, and a person or thing to be searched or seized, was the founders’ neat solution for outlawing such general warrants altogether.

It was an ingenious system, and it worked well until Michael Dimino came along. In 1995, this inventor received a patent for using GPS to locate cellphones. Within a few years, geofencing technology could instantly locate all the people with cellphones within a designated boundary at a specified time. This was a jackpot for law enforcement. If a bank robber was believed to have blended into a crowd, detectives could geofence that area and collect the phone numbers of everyone in that vicinity. Make a request to a telecom service provider, run computer checks on criminals with priors, and voilà, you have your suspect. Thus the technology-enabled practice of conducting a “reverse search” kicked into high gear.

Multiple technologies assist in geofenced investigations. One is a “tower dump,” giving law enforcement access to records of all the devices connected to a specified cell tower during a period of time. Wi-Fi is also useful for geofencing.
When people connect their smartphones to Wi-Fi networks, they leave an exact log of their physical movements. Our Wi-Fi data also record our online searches, which can detail our health, mental health, and financial issues, as well as intimate relationships and political and religious activities and beliefs.

A new avenue for geofencing was created on Monday when President Biden signed into law a measure that will give the government the ability to tap into data centers. The government can now enlist the secret cooperation of the provider of “any” service with access to communications equipment. This gives the FBI, U.S. intelligence agencies, and potentially local law enforcement a wide new field in which to conduct reverse searches based on location data.

In these ways, modern technology imparts an instant, all-around understanding of hundreds of people in a targeted area, at a level of intimacy that Major John André could not have imagined. The only mystery is why criminals persist in carrying their phones with them when they commit crimes.

Google was law enforcement’s ultimate go-to in geofencing. Warrants from magistrates authorizing geofence searches allowed the police to obtain personal location data from Google about large numbers of mobile-device users in a given area. Without any further judicial oversight, the breadth of the original warrant was routinely expanded or narrowed in private negotiations between the police and Google. In 2023, Google moved to end its storage of the data that made geofencing possible by shifting location data from its servers to users’ phones. For good measure, Google encrypted this data. But many avenues remain for a reverse search. On one hand, it is amazing that technology can so rapidly identify suspects and potentially solve a crime.
On the other, technology also enables dragnet searches that pull in scores of innocent people and potentially make their personal lives an open book to investigators. The ACLU writes: “As a category, reverse searches are ripe for abuse both because our movements, curiosity, reading, and viewing are central to our autonomy and because the process through which these searches are generally done is flawed … Merely being proximate to criminal activity could make a person the target of a law enforcement investigation – including an intrusive search of their private data – and bring a police officer knocking on their door.”

Federal judge Mary Hannah Lauck of Virginia recognized this danger in 2022 when she ruled that a geofence in Richmond violated the Fourth Amendment rights of hundreds of people in their apartments, in a senior center, driving by, and in nearby stores and restaurants. Judge Lauck wrote that “it is difficult to overstate the breadth of this warrant” and that an “innocent individual would seemingly have no realistic method to assert his or her privacy rights tangled within the warrant. Geofence warrants thus present the marked potential to implicate a ‘right without a remedy.’”

The ACLU is correct that reverse searches are obvious violations of the plain meaning of the Fourth Amendment. If courts continue to uphold this practice, however, strict limits need to be placed on the kinds of information collected, especially from the many innocent bystanders routinely caught up in geofencing and reverse searches. And any change in the breadth of a warrant should be determined by a judge, not in a secret deal with a tech company.

An amendment to require the FBI and other federal agencies to obtain a probable cause warrant before accessing Americans’ communications under FISA Section 702 fell one vote short in the U.S. House of Representatives on Friday.

This was a disappointment, made worse by an expansion of the government’s surveillance powers contained in the bill. The House-passed bill changes the definition of an electronic communication service provider to require a whole new range of businesses to assist the government in its spying.

But there was also good news. Pressure from reformers succeeded in shortening Section 702 reauthorization from five years to two. The House also passed a measure from Rep. Chip Roy (R-TX) that requires the FBI to give Congress a quarterly report on the number of U.S. person queries it conducts. The combination of a shorter period before the next reauthorization and strengthened oversight of the FBI should serve notice on the FBI and other agencies not to return to their lax treatment of Americans’ privacy and constitutional rights.

Reform received another win on Friday with the passage of an amendment sponsored by Rep. Ben Cline (R-VA) that makes permanent the suspension of “abouts” collection, an intelligence practice in which Americans were targeted for merely being mentioned in a communication. Abuse of “abouts” collection prompted the FISA Court to publicly excoriate the National Security Agency for an “institutional lack of candor” about a “very serious Fourth Amendment issue.”

PPSA joins its civil liberties peers in calling on the Senate to reject any reauthorization that continues Section 702 programs without a warrant requirement for Americans. A recent YouGov poll shows that almost 80 percent of Americans support the warrant requirement. The signals for reform are growing stronger: the American people and a growing coalition in Congress have had enough of Washington’s surveillance abuse.

Forbes reports that federal authorities were granted a court order to require Google to hand over the names, addresses, phone numbers, and user activities of internet surfers who were among the more than 30,000 viewers of a post.
The government also obtained access to the IP addresses of people who weren’t logged onto the targeted account but did view its video.

The post in question is suspected of being used to promote the sale of bitcoin for cash, which would violate money-laundering rules. The government likely had good reason to investigate that post. But did it have to track everyone who came into contact with it?

This is a prime example of the government’s street-sweeper shotgun approach to surveillance. We saw this when law enforcement in Virginia tracked the location histories of everyone in the vicinity of a robbery. A federal judge later found that search meant that everyone in the area, from restaurant patrons to residents of a retirement home, had “effectively been tailed.” We saw the government’s shotgun approach when the FBI secured the records of everyone in the Washington, D.C., area who used their debit or credit cards to make Bank of America ATM withdrawals between Jan. 5 and Jan. 7, 2021. We also saw it when the FBI, searching for possible foreign influence in a congressional campaign, used FISA Section 702 data – meant to surveil foreign threats on foreign soil – to pull the data of 19,000 political donors.

Surfing the web is not inherently suspicious. What we watch online is highly personal, potentially revealing all manner of social, romantic, political, and religious beliefs and activities. The Founders had precisely such dragnet-style searches in mind when they crafted the Fourth Amendment. Simply watching a publicly posted video is not by itself probable cause for a search. It should not compromise one’s Fourth Amendment rights.

Byron Tau – journalist and author of Means of Control: How the Hidden Alliance of Tech and Government Is Creating a New American Surveillance State – discusses the details of his investigative reporting with Liza Goitein, senior director of the Brennan Center for Justice's Liberty & National Security Program, and Gene Schaerr, general counsel of the Project for Privacy and Surveillance Accountability.

Byron explains what he has learned about the hidden world of government surveillance, including how federal agencies purchase Americans’ most personal and sensitive information from shadowy data brokers. He then asks Liza and Gene about reform proposals now before Congress in the FISA Section 702 debate, and how they would rein in these practices.

A federal court has given the go-ahead for a lawsuit filed by Just Futures Law and Edelson PC against Western Union for its involvement in a dragnet surveillance program called the Transaction Record Analysis Center (TRAC).

Since 2022, PPSA has followed revelations about a unit of the Department of Homeland Security that accesses bulk data on Americans’ money wire transfers of more than $500. TRAC is the central clearinghouse for this warrantless information, recording wire transfers sent or received in Arizona, California, New Mexico, Texas, and Mexico. These personal financial transactions – almost 150 million records – are then made available to more than 600 law enforcement agencies, all without a warrant. Much of what we know about TRAC was unearthed by a joint investigation by the ACLU and Sen. Ron Wyden (D-OR). In 2023, Gene Schaerr, PPSA general counsel, said: “This purely illegal program treats the Fourth Amendment as a dish rag.”

Now a federal judge in Northern California has determined that the plaintiffs in Just Futures’ case allege plausible violations of California laws protecting the privacy of sensitive financial records. This is the first time a court has weighed in on the lawfulness of the TRAC program. We eagerly await revelations and a spirited challenge to this secretive program.

The TRAC intrusion into Americans’ personal finances is by no means the only way the government spies on the financial activities of millions of innocent Americans. In February, a House investigation revealed that the U.S. Treasury’s Financial Crimes Enforcement Network (FinCEN) has worked with some of the largest banks and private financial institutions to spy on citizens’ personal transactions. Law enforcement and private financial institutions shared customers’ confidential information through a web portal that connects the federal government to 650 companies that account for two-thirds of U.S. gross domestic product and employ 35 million people. TRAC is ostensibly justified by the border and the activities of cartels, but it sweeps in the transactions of millions of Americans sending payments from one U.S. state to another.
FinCEN set out to track the financial activities of political extremists, but it pulls in the personal information of millions of Americans who have done nothing remotely suspicious. Groups on the left tend to be more concerned about TRAC, while groups on the right, led by House Judiciary Chairman Jim Jordan, are concerned about the mass extraction of personal bank account information. The great thing about civil liberties groups today is their ability to look beyond ideological silos and work together as a coalition to protect the rights of all. For that reason, PPSA looks forward to reporting and blasting out what is revealed about TRAC in this case in open court. Any revelations from this case should sink in on both sides of the aisle in Congress, informing the debate over America’s growing surveillance state.

The reform coalition on Capitol Hill remains determined to add strong amendments to Section 702 of the Foreign Intelligence Surveillance Act (FISA). But will they get the chance before the April 19 deadline for FISA Section 702’s reauthorization?

There are several possible scenarios as this deadline approaches. One of them might be a vote on the newly introduced “Reforming Intelligence and Securing America” (RISA) Act. This bill is a good-faith effort to represent the narrow band of changes that the pro-reform House Judiciary Committee and the status quo-minded House Permanent Select Committee on Intelligence could agree upon. But is it enough? RISA is deeply lacking because it leaves out two key reforms.

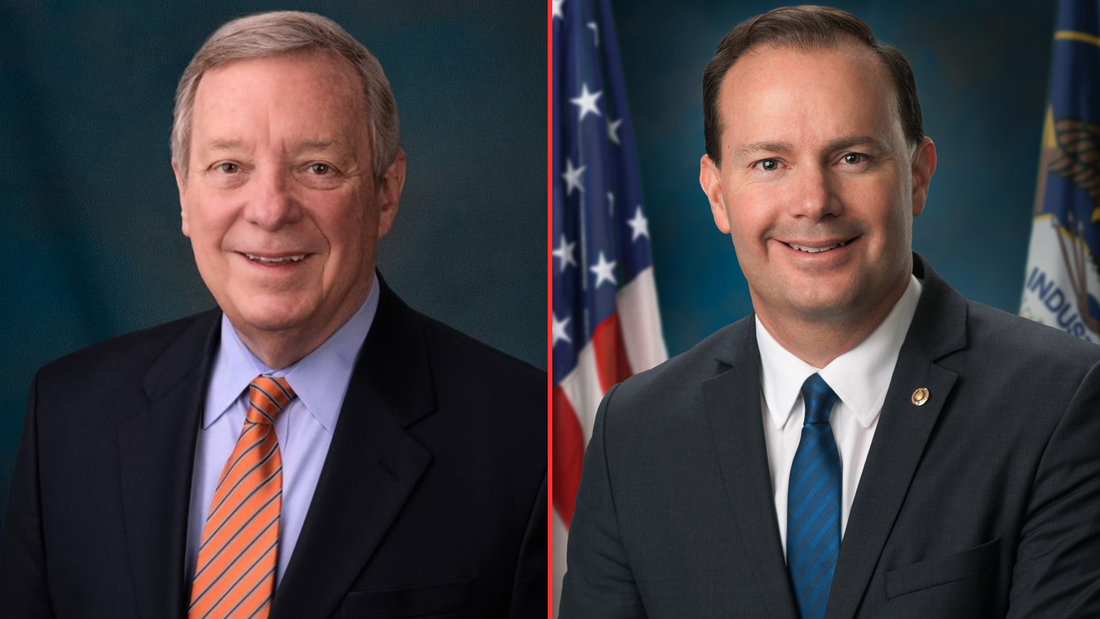

The bill does include a role for amici curiae, specialists in civil liberties who would act as advisors to the secret FISA Court. RISA, however, would limit the issues these advisors could address, falling well short of the intent of the Senate when it voted 77-19 in 2020 to approve the robust amici provisions of the Lee-Leahy amendment. For all these reasons, reformers should see RISA as a floor, not a ceiling, as the Section 702 showdown approaches. The best solution to the current impasse is to stop denying Members of Congress the opportunity for a straight up-or-down vote on reform amendments.

The reauthorization of FISA Section 702, which allows federal agencies to conduct international surveillance for national security purposes, has languished in Congress like an old Spanish galleon caught in the doldrums. This happened after opponents of reform pulled Section 702 reauthorization from the House floor rather than risk losing votes on popular measures, such as requiring government agencies to obtain warrants before surveilling Americans’ communications.

But the winds are no longer becalmed. They are picking up, and they are coming from the direction of reform. Sen. Dick Durbin (D-IL), Chairman of the Senate Judiciary Committee, and fellow committee member Sen. Mike Lee (R-UT) today introduced the Security and Freedom Enhancement (SAFE) Act. This bill requires the government to obtain warrants or court orders before federal agencies can access Americans’ personal information, whether from Section 702-authorized programs or purchased from data brokers.

Enacted by Congress to enable surveillance of foreign targets in foreign lands, Section 702 is used by the FBI and other federal agencies to justify domestic spying. According to the FISA Court, government “batch” searches under Section 702 have included a sitting U.S. Congressman, a U.S. Senator, journalists, political commentators, a state senator, and a state judge who reported civil rights violations by a local police chief to the FBI. Section 702 has even been used by government agents to stalk online romantic prospects. Millions of Americans in recent years have had their communications compromised by programs under Section 702. The reforms of the SAFE Act promise to reverse this trend, protecting Americans’ privacy and constitutional rights from the government. The SAFE Act requires:

Durbin-Lee is a pragmatic bill. It waives the warrant and other requirements in emergency circumstances. The SAFE Act allows the government to obtain consent for surveillance if the subject of the search is a potential victim or target of a foreign plot. It allows queries designed to identify targets of cyberattacks, where the only content accessed and reviewed is malicious software or cybersecurity threat signatures. The SAFE Act is a good-faith effort to strike a balance between national security and Americans’ privacy. It should break the current stalemate, renewing the push for debate and votes on amendments to the reauthorization of Section 702.

How to Tell if You are Being Tracked

Car companies are collecting massive amounts of data about your driving: how fast you accelerate, how hard you brake, and any time you speed. These data are then parsed by LexisNexis or another data broker and sold to insurance companies. As a result, many drivers with clean records are surprised by sudden, large increases in their car insurance payments.

Kashmir Hill of The New York Times reports the case of a Seattle man whose insurance rates skyrocketed, only to discover that the cause was LexisNexis compiling hundreds of pages on his driving habits. This is yet another feature of the dark side of the internet of things, the always-on, connected world we live in. For drivers, internet-enabled services like navigation, roadside assistance, and car apps are also 24/7 spies on our driving habits. We consent to this, Hill reports, “in fine print and murky privacy policies that few read.” One researcher at Mozilla told Hill that it is “impossible for consumers to try and understand” policies chock-full of legalese. The good news is that the data gathered on our driving habits can be made as transparent to us as we are to car and insurance companies. Hill advises:

What you cannot do, however, is request a report from the FBI, IRS, the Department of Homeland Security, or the Pentagon to see if government agencies are also purchasing your private driving data. Given that these federal agencies purchase nearly every electron of our personal data, scraped from apps and sold by data brokers, they may well have at their fingertips the ability to know what kind of driver you are. Unlike the private snoops, these federal agencies are also collecting your location histories, where you go, and by inference, whom you meet for personal, religious, political, or other reasons. All this information about us can be accessed and reviewed at will by our government, no warrant needed. That is all the more reason to support the inclusion of the principles of the Fourth Amendment Is Not for Sale Act in the reauthorization of the FISA Section 702 surveillance policy.

While Congress debates adding reforms to FISA Section 702 that would curtail the sale of Americans’ private, sensitive digital information to federal agencies, the Federal Trade Commission is already cracking down on companies that sell data, including their sales of “location data to government contractors for national security purposes.”

The FTC’s words follow serious action. In January, the FTC announced proposed settlements with two data aggregators, X-Mode Social and InMarket, for collecting consumers’ precise location data scraped from mobile apps. X-Mode, which can assimilate 10 billion location data points and link them to timestamps and unique persistent identifiers, was targeted by the FTC for selling location data to private government contractors without consumers’ consent. In February, the FTC announced a proposed settlement with Avast, a security software company that sold “consumers’ granular and re-identifiable browsing information” collected through Avast’s antivirus software and browser extensions.

What is the legal basis for the FTC’s action? The agency seems to be relying on Section 5 of the Federal Trade Commission Act, which grants the FTC power to investigate and prevent deceptive trade practices. In the case of X-Mode, the FTC’s proposed complaint highlights X-Mode’s statement that its location data would be used solely for “ad personalization and location-based analytics.” The FTC alleges X-Mode failed to inform consumers that X-Mode “also sold their location data to government contractors for national security purposes.”

The FTC’s evolving doctrine seems even more expansive, weighing the stated purpose of data collection and handling against its actual use. In a recent blog post, the FTC declares: “Helping people prepare their taxes does not mean tax preparation services can use a person’s information to advertise, sell, or promote products or services. Similarly, offering people a flashlight app does not mean app developers can collect, use, store, and share people’s precise geolocation information.
The law and the FTC have long recognized that a need to handle a person’s information to provide them a requested product or service does not mean that companies are free to collect, keep, use, or share that person’s information for any other purpose – like marketing, profiling, or background screening.” What is at stake for consumers? “Browsing and location data paint an intimate picture of a person’s life, including their religious affiliations, health and medical conditions, financial status, and sexual orientation.” If these cases go to court, the tech industry will likely argue that consumers do sign away rights to their private information, as we all routinely do when we accept the terms and conditions of our apps and favorite social media platforms. The FTC’s logic, by contrast, points to the common understanding that our data is collected for the purpose of selling us an ad, not for handing over our private information to the FBI, IRS, and other federal agencies. The FTC is edging into the arena of the Fourth Amendment Is Not for Sale Act, which targets government purchases and warrantless inspection of Americans’ personal data. The FTC’s complaints are, for the moment, based on a legal theory untested in court. If Congress attaches similar reforms to the reauthorization of FISA Section 702, it would provide a clear, hard-to-reverse protection of Americans’ privacy and constitutional rights.

Ken Blackwell, former ambassador and mayor of Cincinnati, has a conservative resume second to none. He is now a senior fellow of the Family Research Council and chairman of the Conservative Action Project, which organizes elected conservative leaders to act in unison on common goals. 
So when Blackwell wrote an open letter in Breitbart to Speaker Mike Johnson warning him not to try to reauthorize FISA Section 702 in a spending bill – which would terminate all debate about reforms to this surveillance authority – you can be sure that he was heard.

“The number of FISA searches has skyrocketed with literally hundreds of thousands of warrantless searches per year – many of which involve Americans,” Blackwell wrote. “Even one abuse of a citizen’s constitutional rights must not be tolerated. When that number climbs into the thousands, Congress must step in.” What makes Blackwell’s appeal to Speaker Johnson unique is that he went beyond citing the reform efforts of conservative stalwarts such as House Judiciary Committee Chairman Jim Jordan and Rep. Andy Biggs of the Freedom Caucus. Blackwell also cited the support of the committee’s Ranking Member, Rep. Jerry Nadler, and Rep. Pramila Jayapal, who heads the House Progressive Caucus. Blackwell wrote: “Liberal groups like the ACLU support reforming FISA, joining forces with conservative civil rights groups. This reflects a consensus almost unseen on so many other important issues of our day. Speaker Johnson needs to take note of that as he faces pressure from some in the intelligence community and their overseers in Congress, who are calling for reauthorizing this controversial law without major reforms and putting that reauthorization in one of the spending bills that will work its way through Congress this month.” That is sound advice for all Congressional leaders on Section 702, whichever side of the aisle they are on. In December, members of this left-right coalition joined together to pass reform measures out of the House Judiciary Committee by an overwhelming margin of 35 to 2. This reform coalition is wide-ranging, its commitment is deep, and it is not going to allow a legislative maneuver to deny Members their right to a debate.

U.S. Treasury and FBI Targeted Americans for Political Beliefs

The House Judiciary Committee and its Select Subcommittee on the Weaponization of the Federal Government issued a report on Wednesday revealing secretive efforts between federal agencies and U.S. private financial institutions that “show a pattern of financial surveillance aimed at millions of Americans who hold conservative viewpoints or simply express their Second Amendment rights.”

At the heart of this conspiracy are the U.S. Treasury Department’s Financial Crimes Enforcement Network (FinCEN) and the FBI, which oversaw secret investigations with the help of the largest U.S. banks and financial institutions. They did not lack for resources. Law enforcement and private financial institutions shared customers’ confidential information through a web portal that connects the federal government to 650 companies that together account for two-thirds of U.S. gross domestic product and 35 million employees. This dragnet investigation grew out of the aftermath of the Jan. 6 riot at the U.S. Capitol, but it quickly widened to target the financial transactions of anyone suspiciously MAGA or conservative. Last year we reported on how Bank of America volunteered the personal information of any customer who used an ATM card in the Washington, D.C., area around the time of the riot. In this newly revealed effort, the FBI asked financial services companies to sweep their databases for digital transactions with keywords like “MAGA” and “Trump.” FinCEN also advised companies how to use Merchant Category Codes (MCC) to search through transactions to detect potential “extremists.” Keywords attached to suspicious transactions included the names of outdoor and sporting goods retailers Cabela’s, Bass Pro Shops, and Dick’s Sporting Goods. 
The committee observed: “Americans doing nothing other than shopping or exercising their Second Amendment rights were being tracked by financial institutions and federal law enforcement.” FinCEN also targeted conservative organizations such as Alliance Defending Freedom and Eagle Forum because a left-leaning organization, the Institute for Strategic Dialogue in London, had labeled them “hate groups.” The committee report added: “FinCEN’s incursion into the crowdfunding space represents a trend in the wrong direction and a threat to American civil liberties.” One doesn’t have to condone the breaching of the Capitol and attacks on Capitol Police to see the threat of a dragnet approach that lacked even a nod to the concept of individualized probable cause. What was done by the federal government to millions of ordinary American conservatives could also be done to millions of liberals for using terms like “racial justice” in the aftermath of the riots that occurred after the murder of George Floyd. These dragnets amount to general warrants, exactly the kind of sweeping, indiscriminate violations of privacy that prompted this nation’s founders to enact the Fourth Amendment. If government agencies cannot clear the low hurdle of probable cause in an application for a warrant, they are apt to be making things up or employing scare tactics. If left uncorrected, financial dragnets like these will establish a default rule in which every citizen is automatically a suspect, especially if the government doesn’t like your politics.

When we covered a Michigan couple suing their local government for sending a drone over their property to prove a zoning violation, we asked if there are any legal limits to aerial surveillance of your backyard.

In this case before the Michigan Supreme Court, Maxon v. Long Lake Township, counsel for the local government said that the right to inspect our homes goes all the way to space. He described the imaging capability of Google Earth satellites, asking: “If you want to know whether it’s 50 feet from this house to this barn, or 100 feet from this house to this barn, you do that right on the Google satellite imagery. And so given the reality of the world we live in, how can there be a reasonable expectation of privacy in aerial observations of property?” One justice reacted to the assertion that it would be a search if Google Earth could map a backyard as closely and intimately as a drone. “Technology is rapidly changing,” the justice responded. “I don’t think it is hard to predict that eventually Google Earth will have that capacity.” Now William J. Broad of The New York Times reports that we’re well beyond Google Earth’s imaging of barns and houses. Try dinner plates and forks. Albedo Space of Denver is building a fleet of 24 small, low-orbit satellites that will use imagery to guide responders in disasters such as wildfires and other public emergencies. It will improve the current commercial standard of satellite imaging from a resolution of about a foot to about four inches. A former CIA official with decades of satellite experience told Broad that it will be a “big deal” when people realize that anything they are trying to hide in their backyards will be visible. Skinny-dipping in the pool will only be for the supremely confident. To his credit, Albedo chief Topher Haddad said, “we’re acutely aware of the privacy implications,” promising that management will be selective in its choice of clients on a case-by-case basis. It is good to know that Albedo likely won’t be using its technology to catch zoning violators or backyard sunbathers. We’ve seen, however, that what is cutting-edge technology today will be standard tomorrow. 
This is just one more way in which the velocity of technology is outpacing our ability to adjust. There is, of course, one effective response. We can reject the argument of the Michigan town’s counsel, who said, essentially, that privacy is dead and we should just get over it. Courts and Congress should define orbital and aerial surveillance as searches requiring a probable cause warrant under the Fourth Amendment of the U.S. Constitution before our homes and backyards can be invaded by eyes from above. The greatest danger to privacy is not that Albedo will allow government snoops to watch us in real time. The real threat is a satellite company’s ability to collect private images by the tens of millions. Such a database could then be sold to the government, just as so much commercial digital information is now being sold to the government by data brokers. This is all the more reason for Congress to import the privacy-protecting warrant provisions of the Fourth Amendment Is Not For Sale Act into the reauthorization of FISA Section 702.

In the last century, the surveillance state was held back by the fact that there could never be enough people to watch everybody. Whether through Orwell’s fictional telescreens or the East German Stasi’s apparatus of civilian informants, there could simply never be enough watchers to follow every dissident, let alone enough additional people to piece together all the watchers’ information (although the Stasi’s elaborate filing system came as close as humanly possible to omniscience).

Now, of course, AI can do the donkey work. It can decide when a face, a voice, a word, or a movement is significant and flag it for a human intelligence officer. AI can weave data from a thousand sources – cell-site simulators, drones, CCTV, purchased digital data, and more – and thereby transform data into information, and information into actionable intelligence. The human and institutional groundwork is already in place to feed AI with intelligence from local, national, and global sources in more than 80 “fusion centers” around the country. These are sites where the National Counterterrorism Center coordinates intelligence from the 17 federal intelligence agencies with local and state law enforcement. FBI, NSA, and Department of Homeland Security intelligence networks get mixed in with intelligence from the locals. If you’ve ever reported something suspicious in response to the “If you see something, say something” ads, a fusion center is where your report goes. With terrorists and foreign threats ever present, it makes sense to share intelligence between agencies, both national and local. But absent clear laws and constitutional limits, we are also building the foundations of a full-fledged surveillance state. With no warrant requirements currently in place for federal agencies to inspect Americans’ purchased digital data, there is nothing to stop the fusion of global, national, and local intelligence from a thousand sources into one ever-watchful eye. Step by step, day by day, new technologies, commercial entities, and government agencies add new sources and capabilities to this ever-present surveillance. The latest thread in this weave comes from Axon, the maker of Tasers and body cameras for police. 
Axon has just acquired Fusus, which gives more than 250 police “real-time crime centers” access to the camera networks of shopping centers, hospitals, residential communities, houses of worship, schools, and urban environments. Weave that data with fusion centers, and voilà, you are living in a Panopticon – a realm where you are always seen and always heard. To make surveillance even more thorough, Axon’s body cameras are being sold to healthcare and retail facilities to be worn by employees. Be nice to your nurse. Such daily progress in the surveillance state is all the more reason for the U.S. House, in its debate over the reauthorization of FISA Section 702, to require a warrant before the government can swim freely in this ocean of data, which is our personal information.

David Pierce has an insightful piece in The Verge offering the latest example of how every improvement in online technology leads to yet another privacy disaster.

He writes about an experiment by OpenAI to make ChatGPT “feel a little more personal and a little smarter.” The company is now allowing some users to add memory to personalize this AI chatbot. The result? Pierce writes that “the idea of ChatGPT ‘knowing’ users is both cool and creepy.” OpenAI says it will allow users to remain in control of ChatGPT’s memory and be able to tell it to remove something it knows about you. It won’t remember sensitive topics like your health issues. And it has a temporary chat mode without memory. Credit goes to OpenAI for anticipating the privacy implications of a new technology, rather than blundering ahead like so many other technologists to see what breaks. OpenAI’s personal memory experiment is just another sign of how intimate technology is becoming. The ultimate example of online AI intimacy is, of course, the so-called “AI girlfriend or boyfriend” – the artificial romantic partner. Jen Caltrider of Mozilla’s Privacy Not Included team told Wired that romantic chatbots, some owned by companies that can’t be located, “push you toward role-playing, a lot of sex, a lot of intimacy, a lot of sharing.” When researchers tested one such app, they found it “sent out 24,354 ad trackers within one minute of use.” We would add that data from these trackers could be sold to the FBI, the IRS, or perhaps a foreign government. The first wave of people whose lives will be ruined by AI chatbots will be the lonely and the vulnerable. It is only a matter of time before sophisticated chatbots become ubiquitous sidekicks, as portrayed in so much near-term science fiction. It will soon become all too easy to trust a friendly and helpful voice, without realizing the many eyes and ears behind it.

Just in time for the Section 702 debate, Emile Ayoub and Elizabeth Goitein of the Brennan Center for Justice have written a concise, easy-to-understand primer on what the data broker loophole is, why it is so important, and what Congress can do about it.

These authors note that in this age of “surveillance capitalism” – with a $250 billion market for commercial online data – brokers are compiling “exhaustive dossiers” that “reveal the most intimate details of our lives, our movements, habits, associations, health conditions, and ideologies.” This happens because data brokers “pay app developers to install code that siphons users’ data, including location information. They use cookies or other web trackers to capture online activity. They scrape information from public-facing sites, including social media platforms, often in violation of those platforms’ terms of service. They also collect information from public records and purchase data from a wide range of companies that collect and maintain personal information, including app developers, internet service providers, car manufacturers, advertisers, utility companies, supermarkets, and other data brokers.” Armed with all this information, data brokers can easily “reidentify” individuals from supposedly “anonymized” data. This information is then sold to the FBI, IRS, the Drug Enforcement Administration, the Department of Defense, the Department of Homeland Security, and state and local law enforcement. Ayoub and Goitein examine how government lawyers employ legal sophistry to evade a U.S. Supreme Court ruling against the warrantless collection of location data, as well as the plain meaning of the U.S. Constitution, to access Americans’ most personal and sensitive information without a warrant. They describe the merits of the Fourth Amendment Is Not For Sale Act, and how it would cut off government purchases of “illegitimately obtained information” from companies that scrape photos and data from social media platforms. That last point is critical. Reformers in the House are working hard to amend FISA Section 702 with provisions from the Fourth Amendment Is Not For Sale Act that would require the government to obtain warrants before inspecting our commercially acquired data. 
While the push is on to require warrants for Americans’ data picked up along with international surveillance, the job will be decidedly incomplete if the government can get around the warrant requirement by simply buying our data. Ayoub and Goitein conclude that Congress must “prohibit government agencies from sidestepping the Fourth Amendment.” Read this paper, then call your House Member and let them know that you demand warrants before the government can access our sensitive, personal information.

The word from Capitol Hill is that Speaker Mike Johnson is scheduling a likely House vote on the reauthorization of FISA’s Section 702 this week. We are told that proponents and opponents of surveillance reform will each have an opportunity to vote on amendments to this statute.

It is hard to overstate how important this upcoming vote is for our privacy and the protection of a free society under the law. The outcome may embed warrant requirements in this authority, or it may greatly expand the surveillance powers of the government over the American people. Section 702 enables the U.S. intelligence community to keep a watchful eye on spies, terrorists, and other foreign threats to the American homeland. Every reasonable person wants that, which is why Congress enacted this authority to allow the government to surveil foreign threats in foreign lands. Section 702 authority was never intended to become what it has become: a way to conduct massive domestic surveillance of the American people. Government agencies – with the FBI in the lead – have used this powerful, invasive authority to exploit a backdoor search loophole, conducting millions of warrantless searches of Americans’ data in recent years. In 2021, the secret Foreign Intelligence Surveillance Court revealed that the FBI uses such backdoor searches to pursue purely domestic crimes. Since then, declassified court opinions and compliance reports have revealed that the FBI used Section 702 to examine the data of a House Member, a U.S. Senator, a state judge, journalists, political commentators, and 19,000 donors to a political campaign, and to conduct baseless searches of protesters on both the left and the right. NSA analysts have used it to investigate prospective romantic partners on dating apps. Any reauthorization of Section 702 must require warrants – with reasonable exceptions for emergency circumstances – before the data of Americans collected under Section 702 or obtained by any other means can be queried, as the U.S. Constitution requires. This warrant requirement must cover commercially acquired information, as well as data from Americans’ communications incidentally caught up in the global communications net of Section 702. 
The FBI, IRS, Department of Homeland Security, the Pentagon, and other agencies routinely buy Americans’ most personal, sensitive information, scraped from our apps and sold to the government by data brokers. This practice is not authorized by any statute, nor is it subject to any judicial review. Including a warrant requirement for commercially acquired information as well as Section 702 data is critical; otherwise, the closing of the backdoor search loophole will merely be replaced by the data broker loophole. If the House declines to impose warrants for domestic surveillance, expect many politically targeted groups to have their privacy and constitutional rights compromised. We cannot miss the best chance we’ll have in a generation to protect the Constitution and what remains of Americans’ privacy. Copy and paste the message below, then find your U.S. Representative and deliver it: “Please stand up for my privacy and the Fourth Amendment to the U.S. Constitution: Vote to reform FISA’s Section 702 with warrant requirements, both for Section 702 data and for our sensitive, personal information sold to government agencies by data brokers.”

Government Agencies Pose as Ad Bidders

We’ve long reported on the government’s purchase of Americans’ sensitive and personal information scraped from our apps and sold to federal agencies by third-party data brokers. Closure of this data broker loophole is included in the House Judiciary Committee bill – the Protect Liberty and End Warrantless Surveillance Act – legislation that requires probable cause warrants before the federal government can inspect Americans’ data caught up in foreign intelligence under Section 702 of the Foreign Intelligence Surveillance Act. Of no less importance, the bipartisan Protect Liberty Act also requires warrants for inspection of the huge mass of Americans’ data sold to the government.

Thanks to Ben Lovejoy of 9to5Mac, we now know the magnitude of the need for a legislative solution to this privacy vulnerability. Apple’s 2020 move to require app makers to notify you that you’re being tracked on your iPhone has been thoroughly undermined by a workaround through the technology of device fingerprinting. Add to that Patternz, a commercial spyware tool that extracts personal information from ads and push notifications so it can be sold. Patternz tracks up to 5 billion users a day, utterly defeating phone-makers’ attempts to protect consumer privacy. How does it work? 404 Media reported that Patternz has deals with myriad small ad agencies to extract information from around 600,000 apps. In a now-deleted video, an affiliate of the company boasted that with this capability, it could track consumers’ locations and movements in real time. After this article was posted, Google acted against one such market participant, while Apple promised a response. But given the robustness of these tools, it is hard to believe that new corporate policies will be effective. The underlying problem is that this technology allows government agencies to pose as ad buyers, turning adware into a global tracking tool that federal agencies – and presumably the intelligence services of other governments – can access at will. Patternz can even install malware for more thorough and deeper penetration of customers’ phones and their sensitive information. It is almost as insidious as the zero-day malware Pegasus, which transforms phones into 24/7 spy devices. Enter Patrick Eddington, senior fellow of the Cato Institute. 
He writes: “If you’re a prospective or current gun owner and you use your smartphone to go to OpticsPlanet to look for a new red dot sight, then go to Magpul for rail and sling adapters for the modern sporting rifle you’re thinking of buying, then mosey on over to LWRC to look at their latest gas piston AR-15 offerings, and finally end up at Ammunition Depot to check out their latest sale on 5.56mm NATO standard rounds, unless those retailers expressly offer you the option ‘Do not sell my personal data’ … all of your online browsing and ordering activity could end up being for sale to a federal law enforcement agency. “Or maybe even the National Security Agency.” The government’s commercial acquisition of Americans’ personal information carries troubling implications for both left and right – from abortion-rights activists concerned about women being tracked to clinics, to conservatives who care about the implications of this practice for the Second Amendment or free religious expression, to Americans of all stripes who don’t want their personal and political activities monitored in minute detail by the government. In January, the NSA admitted that it buys our personal information without a warrant. The investigative work performed by 404 Media and 9to5Mac should give Members of Congress all the more reason to support the Protect Liberty Act.

We recently celebrated the decision by Amazon to require police to present a warrant before going through the “Request for Assistance” tool to seek video footage from the neighborhood networks of Ring camera owners. We touted this as a significant victory for privacy.

And it is – it effectively neutralized more than 2,300 agreements Amazon had with local police and fire departments to help them obtain private security footage – but it wasn’t quite as big a deal as we first thought. According to reporting from Baylee Bates of KCEN News in Temple, Texas, Amazon’s change is prompting a big yawn from police. Why? A spokeswoman for the Temple Police Department told Bates that officers had found greater success in requests for video footage by making door-to-door contacts. “We have found throughout the years of gathering the security footage that going to residents, business owners, that face-to-face interaction with people has been way more successful for us,” said Megan Price of the Temple PD. A spokesman for the Bell County Sheriff’s Department told Bates that Amazon’s policy change “doesn’t stop us from going individual-to-individual and talking, the way we prefer to do it anyway.” Since the introduction of Ring, customers have for the most part complied with police requests for videos. If someone set fire to a car in our neighborhood, or burgled a house across the street, we would do the same. But the eagerness of most people to grant police access to surveillance video is concerning, as more neighborhoods become “ringed” with surveillance. Three years ago, a Washington Post story quoted a mother in California telling her seven-year-old son, “Every time you ride your bike down this block, there are probably 50 cameras that watch you going past. If you make a bad choice, those cameras will catch you.” We wrote at the time that George Orwell never imagined millions of Ring cameras – and millions of users willing to hand over video when asked. The threat to privacy from neighborhood surveillance is as much about audio recordings as it is about video. Sen. Edward Markey (D-MA) assessed the risk of a surveillance network in every neighborhood in a letter to Amazon in 2022: “This surveillance system threatens the public in ways that go far beyond abstract privacy invasion: individuals may use Ring devices’ audio recordings to facilitate blackmail, stalking, and other damaging practices. As Ring products capture significant amounts of audio on private and public property adjacent to dwellings with Ring doorbells – including recordings of conversations that people reasonably expect to be private – the public’s right to assemble, move, and converse without being tracked is at risk.” At least you can always step inside, away from the microphones and the cameras, settle into your chair, and let Alexa take over your surveillance.

Cato Institute Senior Fellow Patrick Eddington filed a Freedom of Information Act request with the Department of Defense this week asking two questions.

First, what was the scope and duration of Pentagon aerial surveillance deployed over domestic protesters in the last year of the Trump Administration? Second, why is the Biden Administration shielding those records from the public? In the world of civil liberties critics of the American surveillance state, Patrick Eddington has the pedigree of a highly credentialed practitioner. From 1988 to 1996, Eddington was a military imagery analyst at the CIA’s National Photographic Interpretation Center, where he was officially recognized many times for his work. He’s the real deal. So is former Rep. Adam Kinzinger (R-IL), who knows what he’s talking about when it comes to surveillance aircraft. Kinzinger flew the RC-26 surveillance aircraft in Iraq; it carried a complement of sensors that record people and objects on video, in both visible and infrared frequencies. Eddington reports that from 2020 to 2021, the Pentagon used RC-26B turboprop planes to surveil American protesters. These aircraft have been deployed widely across the country, from Alabama to Washington State. Kinzinger has written that the RC-26 “could fly fast and low, capturing the signals from thousands of cellphones … With the right coordination, the target could be reached in minutes, not hours.” The Air Force Inspector General reports that the digital data packages Kinzinger referred to were removed from the RC-26s deployed for domestic use. Even so, the sensor package of this fleet still represents an astonishing capability to surveil Americans on the ground. Writing in The Orange County Register, Eddington asks: Does the Biden Administration’s stonewalling mean it reserves the right to use the fleet against pro-Trump protesters? Or that a new Trump Administration could use it to track anti-Trump protesters? Or that some future president could turn it against any political enemy? 
Behind the intense rancor in American partisan politics, at least one aspiration unites administrations of both parties – an intent to surveil. PPSA will report on further developments.

The first deepfake of this long-anticipated “AI election” happened when a synthetic Joe Biden made robocalls to New Hampshire Democrats urging them not to vote in that presidential primary. “It’s important that you save your vote for the November election,” fake Biden told Democrats. Whoever crafted this trick expected voters to believe that a primary vote would somehow deplete a storehouse of general-election votes.

Around the same time, someone posted AI-generated fake sexual images of pop icon Taylor Swift, prompting calls for laws to curb and punish the use of this technology for harassment. Other artists are calling for protection not of their likeness but of their intellectual property, as paintings and photographs are expropriated as grist for AI’s mill. Members of Congress and state legislators are racing to pass laws to make such tricks and appropriations a crime. It certainly makes sense to criminalize the cheating of voters by making candidates appear to say and do things they would never say or do. But sweeping legislation also poses dangers to the First Amendment rights of Americans, including crackdowns on what is clearly satire – such as an obvious joke image of a politician in the inset behind the “Weekend Update” anchors of Saturday Night Live. Such caution is needed as pressure for legislative action grows with the proliferation of deepfakes. Beyond celebrities, this technology is used to create sexually abusive material, commit fraud, and harass ordinary individuals. According to Control AI, a group concerned about the current trajectory of artificial intelligence, such technology is now widely available. All someone needs to create a compelling deepfake is a photo of you or a short recording of your voice, which most of us have already very helpfully posted online. Control AI claims that an overwhelming 96 percent of deepfake videos are sexually abusive. And they are becoming more common – 13 times as many deepfakes were created in 2023 as in 2022. Meanwhile, only 42 percent of Americans even know what a deepfake is. The day is fast approaching when anyone can create a convincing fake sex tape of a political candidate, a video of a presidential candidate announcing the suspension of his campaign on the eve of an election, or a fake video of a military general declaring martial law. 
A few weeks ago, a convincing fake video of the Louvre museum in Paris on fire went viral, alarming people around the world. With two billion people poised to vote in major elections around the globe this year, deepfake technology is positioned to brew distrust and wreak some havoc. While the Biden campaign has the resources to quickly refute the endless stream of fake photos and videos, the average American does not. A fake sex tape of a work colleague could burn through the internet before she has a chance to refute it. An AI-generated voice recording could be used to commit fraud, while even a fake photo could do immense damage.

And if you thought forcing AI to include a watermark in whatever it produces would solve the problem, think again. Control AI points out that it is simply impossible to create watermarks that AI cannot easily remove. Many strategies to stop deepfakes are about as effective as trying to keep kids off their parents’ computer.

It is also unrealistic to believe we can slow down the evolution of artificial intelligence, as Control AI proposes to do. America’s enemies can certainly be counted on to use AI to their advantage. Putting AI under government lock and key would stifle the massive innovation that AI promises, hand a technological edge to Russia and China, and give the federal government sole use of the technology. That, too, poses serious problems for surveillance and oversight.

Given the First and Fourth Amendment implications, Congress should not act in haste. Instead, it should start the long and difficult conversation about how best to contain AI’s excesses while benefiting from its promise in human health and wealth creation, and it should continue to hold hearings and investigate solutions. Meanwhile, the best guard against AI deception is a public that is already deeply skeptical of information it encounters online. The more Americans learn what a deepfake is, the less impact these images will have.
We reported last week that the Biden Administration leaked the news that it is drafting an executive order to restrict “countries of concern” from acquiring Americans’ most sensitive and personal digital and DNA information.

At the top of the Administration’s concern is the likely acquisition by the People’s Republic of China of a vast databank of Americans’ DNA from Chinese-owned companies that perform genetic testing for U.S. healthcare. Should we care?

A glimpse of the dangers of such tracking can be seen in how China uses mass DNA mapping of whole populations to track and persecute religious minorities. At a recent conference in Washington, D.C., on surveillance of religious minorities in China, we heard evidence – well documented by many journalists – that China is using facial recognition (with racial filters), car sensors, cell-site simulators, and location tracking to systematically surveil that country’s Uighur Muslim and Tibetan Buddhist minorities. A recent Human Rights Watch whitepaper details how China’s authorities are systematically collecting DNA in Tibet. The cover story for one such program, aimed at people aged 12 to 65, is that the government is performing a health check called Physicals For All, though patients are not allowed to learn the results of any of their tests. DNA testing in Tibet is, to paraphrase The Godfather, an offer that cannot be refused. With this data, the government can track people by ethnicity and map their families by their genes and presumed beliefs. The most pernicious aspect of this program is the collection of children’s DNA, a unique identifier that will never change. Such genetic surveillance also necessarily connects a whole bloodline to any one person suspected of religious dissidence, an approach Chinese police call “one household, one file.” Such files can be used to track people who lead worship services or advocate religious or secular views not approved by the government.
This program of police-community relations is called “spreading information tentacles.” DNA is also used to identify clerics and lamas, village elders, and others who might be holding meetings or conducting unofficial mediation of local disputes (presumably from samples collected at a given site or shrine), and then to track them. Through electronic surveillance, authorities can detect forbidden material accessed on phones and other devices, then turn to DNA mapping to break up social and religious organizations, keeping civil society atomized before the state. China’s DNA database is thus amplified by many forms of electronic surveillance, with artificial intelligence assembling patterns of association and blood relations for police. Researcher Adrian Zenz told the Bulletin of the Atomic Scientists that in 2017 alone, China spent almost $350 billion on internal security.

According to the Biden Administration, China is also spending money to purchase Americans’ data. This could include medical, financial, occupational, familial, and romantic profiles; genetic surveillance adds another tile to a mosaic of the American population for China. An American child sequenced for a medical test today could therefore have his or her genetic health profile and identity known to the Chinese state for life. As the Office of the Director of National Intelligence warns: “The loss of your DNA not only affects you, but your relatives and, potentially, generations to come.”