
The New York Times broke the story that a front company in New Jersey signed a secret contract with the U.S. government in November 2021 to help it gain access to the powerful surveillance tools of Israel’s NSO Group.

PPSA previously reported that the FBI had acquired NSO’s signature technology, Pegasus, which can infiltrate a smartphone, strip all its data, and transform it into a 24/7 surveillance device. Mark Mazzetti and Ronen Bergman of The Times now report that the FBI in recent years tested defenses against Pegasus and also sought “to test Pegasus for possible deployment in the bureau’s own operations inside the United States.” An FBI spokesperson told these journalists the FBI’s version of the software is now inactive.

The secret contract also grants the U.S. government access to NSO’s powerful geolocation tool called Landmark. Mazzetti and Bergman report that such NSO technology has been used thousands of times against targets in Mexico – and that Mexico is named as a venue for the use of NSO technology. Two sources told the journalists that the “contract also allows for Landmark to be used against mobile numbers in the United States, although there is no evidence that has happened.”

This story has caught the Biden Administration flat-footed. The administration had declared this technology a national security threat and placed NSO on a Commerce Department blacklist. In light of these new revelations, Members of Congress should ask the Directors of National Intelligence, the CIA, FBI, and DEA:

This breaking story will likely force the Biden White House to promulgate new rules limiting the use of NSO technology by federal law enforcement and intelligence agencies. As it does, Congress should be involved every step of the way. This technology is frightening because NSO tools can be installed remotely on smartphones with the most updated security software, and without the user succumbing to phishing or any other obvious form of attack. The need for a detailed policy limiting the use of these tools is urgent.

NSO technology is to ordinary surveillance what nuclear weapons are to conventional weapons. Because nuclear weapons are hard to make, Washington, D.C. had time to plan and enact a global non-proliferation regime that slowed their spread. In the case of Pegasus and Landmark, however, this technology easily proliferated in the wild before Washington was even fully aware of its existence.

Pegasus has been used by drug cartels to track down and murder journalists. It has been used by an African government to listen in on conversations between the daughter of a kidnapped man and the U.S. State Department. It was famously used to plan the murder of Jamal Khashoggi. Does anyone doubt that Russian and Chinese intelligence have secured their own copies?

Now Washington is racing to catch up with foreign adversaries while trying to limit the use of this technology at the same time. NSO, through its amoral proliferation of dangerous technology, has made the world a riskier place. As federal agencies seek to get their hands on this technology, Congress should paint a bright red line – DO NOT USE DOMESTICALLY, EVER.

Never let a moral panic go to waste. A legitimate concern – the likely exposure of 150 million Americans’ data from TikTok to the People’s Republic of China – somehow morphed into the Restrict Act, which never mentions TikTok.

Supported across the ideological spectrum by Sens. Mark Warner, Joe Manchin, Mitt Romney, and Shelley Moore Capito, the Restrict Act would transform a rather innocuous figure, the Secretary of Commerce, into an American Cardinal Richelieu. The bill would empower the Secretary of Commerce “to review and prohibit certain transactions between persons in the United States and foreign adversaries” regarding virtually all hardware and software communications technology, as well as data storage, machine learning, predictive analytics, and data science providers. The bill also covers software for desktops, mobile applications, gaming, payment, and web-based applications. The bill labels the People’s Republic of China, as well as Cuba, Iran, Russia, North Korea, and Venezuela as foreign adversaries, which they certainly are. But it would grant the Secretary the power to consult with the Director of National Intelligence to name any country’s technology as a national security threat. In addition, under the bill the Secretary would have the power to ban “entities” held by hostile foreign labor unions, equity investors, partnerships or of “any participation […] and of any character.” It covers just about every aspect of technology and e-commerce with a potentially foreign connection. The Secretary of Commerce, usually known for cutting ribbons and handing out awards, would acquire duties commonly associated with the CIA’s Clandestine Service. Commerce Secretary Gina Raimondo would be empowered to “identify, deter, disrupt, prevent, prohibit, investigate, or otherwise mitigate” not just the “information and communications technology products” listed above, but also anything that could be construed to involve “Federal elections” and “national security.” The bill also targets “the digital economy,” presumably meaning the Secretary of Commerce could, with the stroke of a pen and no further debate in Congress, deter, disrupt, or prevent Bitcoin and other cryptocurrencies. 
Americans who violate these restrictions would face up to $1 million in fines and 20 years in prison. And violate what, exactly? This bill is as vague as it is repressive. As Elizabeth Nolan Brown of Reason notes, the Restrict Act could easily be interpreted as criminalizing virtual private networks, which enable Americans’ privacy. Thus, teenagers who use VPNs to watch burping contests on TikTok could face a $1 million fine and 20 years in prison. “We’ve seen many times the way federal laws are sold as attacks on big baddies like terrorists and drug kingpins yet wind up used to attack people engaged in much more minor activities,” Brown wrote. Or, as Cardinal Richelieu said, “If you give me six lines written by the hand of the most honest of men, I will find something in them which will hang him.” As extreme as it is, it would be a mistake to discount the Restrict Act. The White House is strongly backing it. On Capitol Hill, this legislation has momentum, surfing on the cresting wave of indignation after the poorly received testimony of TikTok’s CEO. And if it falls short, it reveals a deep state hunger for power that will surely find expression in smaller, more passable bills. Which raises the question: If we reject the Restrict Act, what should be done about TikTok? As civil libertarians, we find ourselves at a point of agony concerning the proposed banning of TikTok’s social media platform in the United States. We don’t like government having the power to pull down a vibrant ecosystem of speech, one with many minority viewpoints, and on which small businesses and influencers depend. Yet TikTok’s promise to quarantine Americans’ data from ByteDance, its Chinese owner, is risible. ByteDance must comply with a Chinese law that mandates sharing data with Chinese intelligence. As we’ve pointed out, TikTok has repeatedly violated its own standards – most recently by allegedly surveilling American journalists in a likely attempt to catch dissidents or whistleblowers. 
Nor would “Project Texas” – TikTok’s proposal to house American data, most likely with Oracle in Austin – be a foolproof way to quarantine Americans’ data from China. Vigilance about government surveillance must include all governments. As overbearing as Washington can be, nothing compares to the malevolence of the Chinese Communist Party toward the United States and the American people. For that reason, it makes sense to ban TikTok or force a sale under current sanctions law or a narrowly targeted bill. But the Restrict Act gives overreaction a bad name. It would be a rich irony if Congress protected Americans from the importation of Chinese surveillance by turning the Department of Commerce into the technology police, enforcing vague laws with sweeping investigatory power. The Restrict Act is a blueprint for tyranny.

Realtors will tell you that the price of a home is all about location, location, location. But in surveillance policy, location is not the only thing that matters.

In a recent Senate hearing, Sen. Ron Wyden (D-OR) asked FBI Director Christopher Wray about reports the Bureau was purchasing Americans’ location data. Wray replied that the FBI does not “purchase communications database information that includes location data derived from internet advertisement.” The FBI, Director Wray explained, did purchase such information at some unspecified time in the past, but that was part of a since-discontinued pilot program. A few days later, Department of Justice Inspector General Michael Horowitz testified on the Hill that warrantlessly purchasing location data, in the wake of the 2018 Supreme Court Carpenter opinion, should be considered off-limits.

So far, so good. But what about the questions not asked? Our devices generate a lot more information about us than just our location and movements. Data reveal our networks of friends and associates, political beliefs, religious beliefs and worship, sexual lives and preferences, and other deeply sensitive information – the sort of “data” that snoops once had to pick the lock of a diary to learn.

The first of these other questions we’d love to ask Director Wray: Is the Bureau still purchasing other sensitive data on Americans? This question comes to mind after Vice’s Motherboard tech blog revealed a contract showing that in 2017 the FBI paid more than $76,000 through a middleman to purchase “netflow” data from a data broker, Team Cymru, which obtained it from internet service providers. Netflow data can show which server communicated with another, giving the FBI the ability to track internet traffic through virtual private networks. It can include websites visited and cookies, digital details that can collectively form a portrait of a user. The purchase was made for the FBI’s Cyber Crime division. Some more questions for Director Wray:

It was recently revealed that the FBI made 204,090 U.S. person queries of NSA databases – equivalent to a warrantless search of every citizen of Richmond, Virginia. Director Wray should face these questions in his next hearing. A fuller explanation of what kinds of warrantless data the FBI extracts and uses is, after all, the minimum that Congressional oversight requires.

In his frequent testimonies on the Hill, Department of Justice Inspector General Michael Horowitz is – by the standards of Washington, D.C. – refreshingly frank. In a House hearing last week, Rep. Ben Cline (R-VA) asked Horowitz penetrating questions and received some intriguing answers. Cline noted that in the Senate’s recent annual worldwide threats hearing, FBI Director Christopher Wray divulged that the Bureau has in the past bought Americans’ precise geolocation data derived from mobile phone advertising, before backing away from the practice in the face of thorny legal issues.

Cline then asked Horowitz: “Have other parts of the DOJ purchased or are other parts of the DOJ still purchasing this kind of information about Americans?” Horowitz replied, “we’re looking at that, we saw those news stories.” He promised to follow up with answers. “Needless to say,” Cline said that if such surveillance is still occurring, “that would be very disturbing.” Cline then asked: “How did the FBI and potentially other elements of the DOJ come to believe that buying location information about Americans without a warrant would be legal, especially after the Supreme Court’s decision in U.S. v. Carpenter (2018), which held that Americans’ location information is protected by the Fourth Amendment?” “I think it raises precisely the issues you mention,” Horowitz replied. “What I am supposing may have happened is that before Carpenter, of course, the department took advantage at least in some instances of the ambiguity in the law. Post-Carpenter that of course shouldn’t have happened.” Cline then asked if the inspector general knew of other parts of DOJ buying similar sensitive information, such as communications or internet records. Horowitz replied that he is following up on an inquiry from Sen. Ron Wyden (D-OR) about a surveillance program run through the office of the Attorney General of Arizona. This is a reference to an unorthodox surveillance scheme in which a unit of the Department of Homeland Security surveilled wire money transfers of Americans above $500 – some to Mexico, some within the United States. This bulk data was centralized in a non-profit, private-sector organization, which shared it with hundreds of federal, state, and local law enforcement agencies. “These are issues on our radar,” Horowitz said. 
So in the span of three minutes, the Department of Justice Inspector General unambiguously affirmed that warrantless access by federal agencies to location data would violate a Supreme Court ruling, and promised to report both on whether agencies are illicitly buying Americans’ location data and on the Arizona wire-money transfer surveillance. Rep. Cline concluded his questioning by telling Horowitz that he has “a track record of achieving compliance from agencies that otherwise are reluctant to provide it.” The congressman said he hoped Horowitz would continue to be aggressive, because it “doesn’t seem that the message is getting through.”

In “A Scanner Darkly,” a 2006 film based on a Philip K. Dick novel, Keanu Reeves plays a government undercover agent who must wear a “scramble suit” – a cloak that constantly alters his appearance and voice to avoid having his cover blown by ubiquitous facial recognition surveillance.

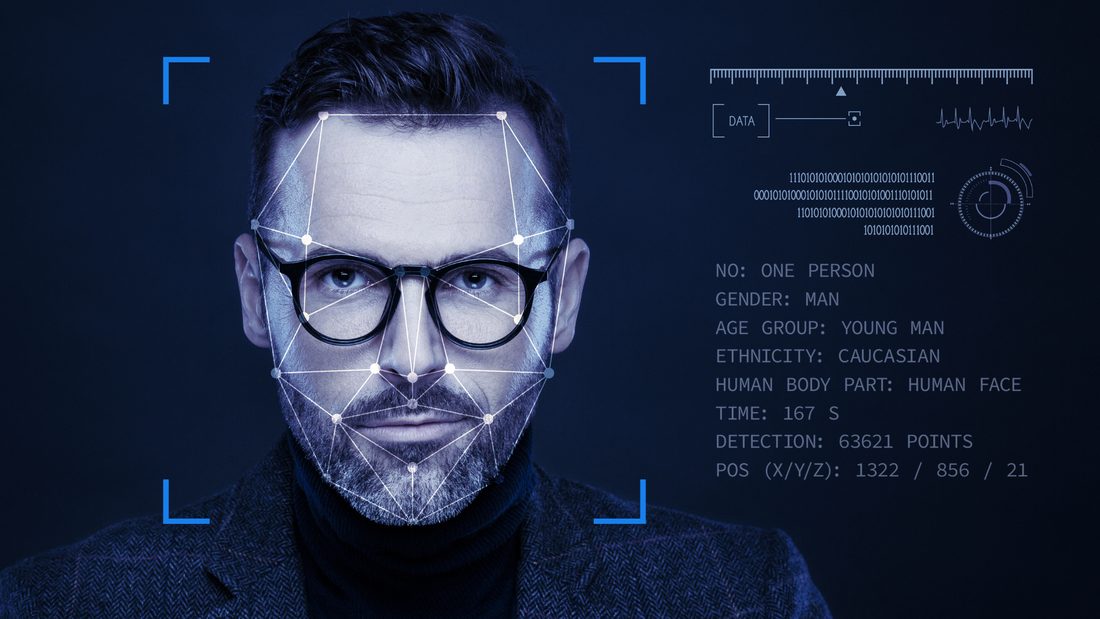

At the time, the phrase “ubiquitous facial recognition surveillance” was still science fiction. Such surveillance now exists throughout much of the world, from Moscow, to London, to Beijing. Scramble suits do not yet exist, and sunglasses and masks won’t defeat facial recognition software (although “universal perturbation” masks sold on the internet purport to defeat facial tracking). Now that companies like Clearview AI have reduced human faces to the equivalent of personal ID cards, the proliferation of cameras linked to robust facial recognition software has become a privacy nightmare. A year ago, PPSA reported on a technology industry presentation that showed how stationary cameras could follow a man, track his movements, locate people he knows, and compare all that to other data to map his social networks. Facial recognition doesn’t just show where you went and what you did: it can be a form of “social network analysis,” mapping networks of people associated by friendship, work, romance, politics, and ideology. Nowhere is this capability more robust than in the People’s Republic of China, where the surveillance state has reached a level of sophistication worthy of the overused sobriquet “Orwellian.” A comprehensive net of data from a person’s devices, posts, searches, movements, and contacts tells the government of China all it needs to know about any one of 1.3 billion individuals. That is why so many civil libertarians are alarmed by the responses to an ACLU Freedom of Information (FOIA) lawsuit. 
The Washington Post reports that government documents released in response to that FOIA lawsuit show that “FBI and Defense Department officials worked with academic researchers to refine artificial-intelligence techniques that could help in the identification or tracking of Americans without their awareness or consent.” The Intelligence Advanced Research Projects Activity, a research arm of the intelligence community, aimed in 2019 to increase the power of facial recognition, “scaling to support millions of subjects.” Included in this is the ability to identify faces from oblique angles, even from a half-mile away.

The Washington Post reports that dozens of volunteers were monitored within simulated real-world scenarios – a subway station, a hospital, a school, and an outdoor market. The faces and identities of the volunteers were captured in thousands of surveillance videos and images, some of them captured by drone. The result is an improved facial recognition search tool called Horus, which has since been offered to at least six federal agencies. An audit by the Government Accountability Office found in 2021 that 20 federal agencies, including the U.S. Postal Service and the Fish and Wildlife Service, use some form of facial recognition technology.

In short, our government is aggressively researching facial recognition tools that are already used by the Russian and Chinese governments to conduct the mass surveillance of their peoples. Nathan Wessler, deputy director of the ACLU, said that the regular use of this form of mass surveillance in ordinary settings would be a “nightmare scenario” that “could give the government the ability to pervasively track as many people as they want for as long as they want.”

As we’ve said before, one does not have to infer a malevolent intention by the government to worry about its actions. Many agency officials are desperate to catch bad guys and keep us safe.
But they are nevertheless assembling, piece-by-piece, the elements of a comprehensive surveillance state.

In today’s public hearing before the U.S. Senate Select Committee on Intelligence, Sen. Mike Rounds (R-SD) asked FBI Director Christopher Wray about the need to reauthorize Section 702 of the Foreign Intelligence Surveillance Act.

This question was asked in the shadow of a Wall Street Journal story last year reporting that the FBI had conducted up to 3.4 million U.S. person queries in 2021, which are warrantless searches of Americans’ personal data in the 702 database. At the time, the FBI cautioned on background that the number was inflated by the inclusion of Americans’ data in an effort to protect these potential victims from cyberattacks from China, Russia, and other hostile countries.

In today’s session, Director Wray said the FBI is “surgical and judicious” in its searches, making big strides in its database systems and training to minimize such intrusions. Director Wray further asserted that in 2022, the Bureau had achieved a 93 percent reduction in such U.S. person queries. This apparently includes the elimination of those cases that fall in the cyber category. Shortly after, Charlie Savage of The New York Times reported that a senior FBI official clarified that the actual number was shy of 204,090.

In other words, the FBI director today admitted that the Bureau had compromised the Fourth Amendment rights of Americans about 204,000 times in just one year, or about 559 times per day. To put this in comparative terms, Sen. Rounds might want to consider that this number equals the total population of South Dakota’s largest city – Sioux Falls – plus the small city of Aberdeen.

PCLOB Board Member: Section 702 Domestic Searches of Americans of “Minimal to Negligible” Value

3/7/2023

Travis LeBlanc, board member of the U.S. Privacy and Civil Liberties Oversight Board (PCLOB), takes his position as a privacy watchdog seriously. Until the appointment of Sharon Bradford Franklin as PCLOB Chair, LeBlanc was the lone voice of public criticism and questioning of the largely secret activities of the intelligence community.

Expectations for PCLOB have long been low. A report on a surveillance authority, Executive Order 12333, was six years in the making. The public-facing version turned out to be a high school-level paper that seemed written out of Wikipedia. In June 2021, LeBlanc went public with his dissatisfaction with PCLOB’s reluctance to explore contentious issues, such as 12333 and a program called XKEYSCORE that allows the NSA to sweep the global internet.

PCLOB of late has been showing its colors as an independent agency. It has long examined Section 702 of the Foreign Intelligence Surveillance Act, which allows intelligence agencies to carry out warrantless data collection. In recent years, there has been mounting evidence that the FBI has used Section 702 data as a “backdoor search” tool to warrantlessly locate information about Americans. The Office of the Director of National Intelligence has reported that the FBI has conducted up to 3.4 million searches for U.S. persons in the body of 702 data.

On Monday, LeBlanc appeared at the State of the Net Conference in Washington, as reported by cyberscoop.com. “We have a large number of compliance issues that we’ve seen over the years and the compliance issues particularly around U.S. person queries are quite significant,” LeBlanc said, expressing concern about Congress renewing this authority without serious reforms. He suggested Congress should consider adding a warrant process for searches of Americans.

Most interesting of all, LeBlanc said there are “minimal to negligible examples of the value” of these domestic searches. His statement rebuts the claim made in January by Gen. Paul Nakasone, head of U.S. Cyber Command, who appeared before PCLOB in a public event to discuss many foreign threats that he said had been detected and neutralized because of Section 702. LeBlanc’s statement adds some missing context to the general’s characterization of the domestic uses of this program.
It seems on the domestic side to be all violation and no value, at least from a national security standpoint. At that same January event, Cindy Cohn of the Electronic Frontier Foundation said: “I think we have to be honest at this point that the U.S. has de facto created a national security exception to the U.S. Constitution.” LeBlanc’s statement on Monday seems to add – “and for what?”

“Run Like a Corrupt Government”

Politico on Monday released the results of an investigation into “virtually unknown” domestic intelligence activities within the Department of Homeland Security.

In documents obtained by Politico, one DHS employee said that the DHS Office of Intelligence and Analysis is “shady” and is “run like a corrupt government.” Some employees were so worried about the thin legal justification for their domestic spying activities that they wanted their employer to cover them with legal liability insurance. A survey by I&A Field Operations Division, now called the Office of Regional Intelligence, found that one-half of respondents said they had alerted managers that they were concerned their activity was inappropriate or illegal. Many felt senior leadership had an “inability to resist political pressure.” “In recent years, the office’s political leadership – Democrat and Republican – has pushed I&A to take a more and more expansive view of its mandate, putting officers in the position of surveilling Americans’ views and associations protected by the U.S. Constitution,” said Spencer Reynolds, counsel at the Brennan Center for Justice at New York University Law School, himself a former DHS intelligence and counterintelligence attorney. “There’s a tendency to use the office’s power to paint political opponents – be they left-wing demonstrators or QAnon truthers – as extremists and dangerous. This has had a disastrous impact on morale – most people don’t join the Intelligence Community to monitor their fellow Americans’ political, religious, and social beliefs.” He added that I&A’s leadership has “sidelined” oversight offices, leaving employees little recourse but to comply. I&A intelligence agents can also seek voluntary interviews with incarcerated people, including people awaiting trial. They must state that the interview is voluntary and that they have no sway over judges either in criminal or immigration cases. But they also can seek these interviews with inmates and those awaiting trial without alerting their attorneys. In many cases, the interviewees’ lawyers aren’t aware that the conversations are happening. 
“While this questioning is purportedly voluntary, DHS’s policy ignores the coercive environment these individuals are held in,” said Patrick Toomey of the American Civil Liberties Union National Security Project. “It fails to ensure that individuals have a lawyer present, and it does nothing to prevent the government from using a person’s word against them in court.”

The civil liberties community owes a big debt of gratitude to Politico for this in-depth piece. Domestic intelligence gathering is pervasive and often without guardrails. Congress has much to investigate.

Will the Intelligence Community Remove Warrantless Surveillance of Americans from Section 702?

3/2/2023

Letter from Attorney General Garland and Director Haines

Attorney General Merrick Garland and Director of National Intelligence Avril Haines wrote to the leaders of Congress to tell them that they must reauthorize Section 702 of the Foreign Intelligence Surveillance Act – “promptly” – so terrorists and foreign actors won’t attack us.

And to be fair, there are terrorists and state actors who wish to reach into our homeland and do us harm. The attorney general and director inform us that Section 702 data has been used to protect “against national security threats” from China and North Korea. It stopped components for weapons of mass destruction from reaching foreign actors, and disrupted terrorist and cyber threats. To which we say, thank you for your service!

Yet, we wish that were all. This letter ignores important failings of Section 702. They write, “Because Section 702 can only be used to target individual non-U.S. persons located outside the United States, it may not be directed against Americans at home or abroad.” This is not, however, what happens. It is what is supposed to happen because Congress explicitly crafted Section 702 to protect us against the kinds of national security threats named in the letter from Garland and Haines. It forbids domestic spying and commands agencies to observe the Fourth Amendment.

The secret Foreign Intelligence Surveillance Court revealed in 2020 that the FBI has used Section 702 data in cases that include “health-care fraud,” “public corruption and bribery,” and more serious domestic concerns like extremists and “violent gangs.” The court observed: “None of these queries was related to national security.”

Nor did Garland and Haines address the FBI’s warrantless 3.4 million backdoor searches of Americans’ data in 2021 – a figure published by the agency itself and since revealed to be “murky,” suggesting that part of FISA reauthorization should require the FBI to get its own data in order. It is this kind of behavior that prompted the FISA Court to issue several opinions finding “widespread violations” by the FBI in its use of Americans’ communications in backdoor searches. One of the Americans searched was an unnamed Member of Congress.
The failure of the government to report systemic non-compliance prompted the secret court to denounce the National Security Agency for an institutional “lack of candor.” As we’ve noted elsewhere, that’s a choice phrase the FBI uses when it terminates an agent for lying to the Bureau.

The letter does promise that the intelligence community and Department of Justice are committed “to engaging with Congress on potential improvements to the authority that fully preserve its efficacy,” but no substantive reforms are named. Many civil liberties groups see this letter as a very discouraging opening bid given the massive extent of government surveillance of Americans.

The danger for the intelligence community is that if they play a game of chicken with Congress, they might well lose with the expiration of this authority. On the other hand, if they are serious – and are willing to accept an ironclad prohibition of the warrantless surveillance of Americans from Section 702 data – the law should have an excellent chance of being reauthorized before it expires in December. We can protect both national security and the rights of Americans from warrantless government surveillance. We urge Attorney General Garland and Director Haines to listen and be willing to live up to the guarantees of the Fourth Amendment.

Did You Know Your Jeweler and Car Dealer Report You to the Feds?

Federal intelligence and law enforcement agencies and their champions on Capitol Hill are bringing together – often with the best of intentions – all the elements needed to create a Chinese-style comprehensive surveillance state here at home. Rep. John Rose (R-TN), a member of the House Committee on Financial Services, is aiming to curb the government’s thirst for surveillance in at least one domain – our personal financial information.

First, some background. Last year, PPSA reported on the Transparency and Accountability in Service Providers Act that would have enlisted a host of employees of non-bank financial institutions to spy on their customers and report any activity deemed suspicious to the Financial Crimes Enforcement Network (FinCEN). Among the 7.2 million government informants deputized to spy would have been “financial gatekeepers” ranging from attorneys to trustees, those who wire money, financial services advisors, financial managers, and most of the financial services industry. Thankfully, that proposal did not make it into law in the last Congress.

But existing financial regulations in the current anti-money laundering regime are extensive. Banks continuously scrutinize customers’ accounts and send voluminous reports to FinCEN, recording and reporting your financial life to the government without having to bother with warrants or other niceties required by the Fourth Amendment. Other “financial institutions” are similarly required to make such reports, including merchants you might never have imagined would be in this category – ranging from pawn shops, to car dealers, to jewelers, to broker-dealers.

At a Cato Institute event Monday, Rep. Rose, himself a former banker with deep experience in trying to comply with the law, spoke of how impossible it is for financial institutions to decipher exactly what regulations require of them in compiling “suspicious activity reports” and “currency transaction reports.” “Banks have no idea what to report,” Rep. Rose said. As a result, reporting institutions, fearing that an omitted line of data might later be used against them by law enforcement, tend to throw as much of our data – aka, our privacy – as they can at the feds. “They want as much as they can get,” Rep. Rose said. He added that these regulations largely explain the rising cost of opening a checking account.
Similar federal requirements are heaped on ATM systems, raising costs and leaving fewer outlets for low-income customers and the unbanked or underbanked.

Aaron Klein of the Brookings Institution noted that this system’s hunger for ever-more data isn’t even good for law enforcement. “They are looking for a needle in a haystack, but they keep pouring in more hay.” Klein suggested that policymakers from across the spectrum have a common interest in reforming this flatly unconstitutional financial surveillance system. Those on the left want to make it easier and cheaper for the unbanked and low-income bank customers to get access to financial services. Those on the right are concerned that these programs violate civil liberties. And even law enforcement should be interested in reform so it can refine its searches for patterns typical of terrorists, human traffickers, and drug dealers, instead of drinking from a digital firehose.

Perhaps such a coalition will support Rep. Rose’s Bank Privacy Reform Act, which would reform the Bank Secrecy Act of 1970 by repealing requirements for financial institutions to report their customers’ financial information and transaction histories to government agencies without a warrant. Under Rep. Rose’s bill, financial institutions would still maintain customer records for government to examine… but only after agents present them with a probable cause warrant, as the Founders intended.

PPSA recently reported that the Bureau of Alcohol, Tobacco, Firearms and Explosives (ATF), in a response to our Freedom of Information Act (FOIA) request, downplayed its use of stingrays, as cell-site simulators are commonly called. Yet one agency document revealed that stingrays are “used on almost a daily basis in the field.” This was a critical insight into real-world practice. These cell-site simulators impersonate cell towers to track mobile device users.
Stingray technology allows government agencies to collect huge volumes of personal information from many cellphones within a geofenced area. We now have more to report with newly-released documents that, as before, include material for internal training of ATF agents. One of the most interesting findings is not what we can see, but what we can’t see – the parts of documents ATF takes pains to hide. The black ink covers a slide about the parts of the U.S. radio spectrum. Since this is a response to a FOIA request about stingrays, it is likely that the spectrum discussed concerns the frequencies telecom providers use for their cell towers. What appears to be a quotidian training course for agents on electronic communications has the title of the course redacted. If that is so, was there something revealing about the course title that we are not allowed to see? Could it be “Stingrays for Dummies?” The redactions also completely cover eleven pages about pre-mission planning. Do these pages reveal how ATF manages its legal obligations before using stingrays? This course presentation ends somewhat tastelessly, a slide with a picture of a compromised cell-tower disguised as a palm tree. In the release of another tranche of ATF documents, forty-five pages are blacked out. It appears from the preceding email chain that these pages included subpoenas for a warrant executed with the New York Police Department. The document assigns any one of a pool of agents to “swear out” a premade affidavit to support the subpoena. The ATF reveals it uses stingrays on aircraft, which requires a high level of administrative approval. It seems, however, from an ATF PowerPoint presentation that this is a policy change, which suggests that prior approvals were lax. Was this a reaction to the 2015 Department of Justice’s policy on cell-site simulators? If aerial surveillance now requires a search warrant, what was previously required – and how was such surveillance used? 
Was it used against whole groups of protestors? Finally, the documents reveal that the ATF has had cell-site simulators in use in field divisions in major cities, including Chicago, Denver, Detroit, Houston, Kansas City, Los Angeles, Phoenix, and Tampa, as well as other cities. PPSA will report more on ATF’s ongoing document dumps as they come in.

By The Way... Here's How ATF Glosses Over Its Location Tracking

The training manual of the Bureau of Alcohol, Tobacco, Firearms and Explosives states that cell-site simulators “do not function as a GPS locator, as they do not obtain or download any location information from the device or its applications.” This claim is disingenuous. It is true that exact latitude and longitude data are not taken. But by tricking a target’s phone into connecting and sending strength of signal data as if to a cell tower, the cell-site simulator allows the ATF to locate the cellphone user to within a very small area. If a target’s phone connects to multiple cell-site simulators, agents can deduce his or her movements throughout the day.

Below is an example from a Drug Enforcement Administration document that shows how this technology can be used to locate a target (seen within the black cone) in a small area.

In response to a Freedom of Information Act request filed by PPSA, the Bureau of Alcohol, Tobacco, Firearms and Explosives (ATF) responded with a batch of documents, including internal training material. In those documents, the ATF confirmed that it uses cell site simulators, commonly known as “stingrays,” to track Americans.

Stingrays impersonate cell towers to track mobile device users. These devices give the government the ability to conduct sweeping dragnets of the metadata, location, text messages, and other data stored by the cell phones of people within a geofenced area. Through stingrays, the government can obtain a disturbing amount of information. The ATF has gone to great lengths to obfuscate its usage of stingrays, despite one official document claiming stingrays are “used on almost a daily basis in the field.” The ATF stressed that stingrays are not precise location trackers like GPS, despite the plethora of information stingrays can still provide. Answers to questions from the Senate Appropriations Committee about the ATF’s usage of stingrays and license plate reader technology are entirely blacked out in the ATF documents we received. An ATF policy conceals the use of these devices from their targets, even when relevant to their legal defense. Example: When an ATF agent interviewed by a defense attorney revealed the use of the equipment, a large group email was sent out saying: "This was obviously a mistake and is being handled." The information released by the ATF confirms the agency is indeed utilizing stingray technology. Although the agency attempted to minimize the usage of stingrays, it is clear they are being widely used against Americans. PPSA will continue to track stingray usage and report forthcoming responses to pending Freedom of Information Act requests with federal agencies.

In the course of the 2020 presidential election, the FBI approached and pressured Twitter to grant the agency access to private user data. This information has come to light as part of the “Twitter Files” exposé, a sprawling series of reports based on internal documents made available through Elon Musk’s ownership of the site.

In January of 2020, Yoel Roth, former Twitter Trust and Safety head, was pressured by the FBI to provide access to data ordinarily obtained through a search warrant. Roth had been previously approached by the FBI’s national security cyber wing in 2019 and had been asked to revise Twitter’s terms of service to grant access to the site’s data feed to a company contracted by the Bureau. Roth drafted a response to the FBI, reiterating the site’s “long-standing policy prohibiting the use of our data products and APIs for surveillance and intelligence-gathering purposes, which we would not deviate from.” While Twitter would continue to be a partner to the government to combat shared threats, the company reiterated that the government must continue to “request information about Twitter users or their content […] in accordance with [the] valid legal process.” Twitter and other social media platforms have been aware of increasing FBI encroachment for some time. In January of 2020, Carlos Monje Jr., former Director of Public Policy and Philanthropy at Twitter, wrote to Roth, saying “we have seen a sustained (if uncoordinated) effort by the IC [intelligence community] to push us to share more info & change our API policies. They are probing & pushing everywhere they can (including by whispering to congressional staff)...” Accordingly, between January 2020 and November 2022, over 150 emails were sent between the FBI and Roth. Not only is the FBI trying to gain a backdoor into Twitter’s data stream; in several cases, the Bureau has also pressured Twitter to pre-emptively censor content, opinions, and people. For example, the agency allegedly demanded that Twitter tackle election misinformation by flagging specific accounts. The FBI pointed to six accounts, four of which were ultimately terminated. One of those profiles was a notorious satire account, which calls into question the FBI’s ability to spot fakes. 
In November, the FBI handed Twitter a list of an additional twenty-five accounts that “may warrant additional action.” And, of course, there is the story about Hunter Biden’s laptop. According to the “Twitter Files,” the FBI pressured Twitter to censor the story as a possible Russian misinformation attack. This was a major story mere days before a presidential election, which the FBI worked to suppress. Expanding efforts by the FBI to gain a backdoor into private social media information are a grave concern, as are the Bureau’s efforts to suppress information. That the agency continues to pursue such options even after being advised that those options violate normal legal procedures is yet another example of how the agency has become increasingly politicized, to the extent that a House Judiciary Committee report described the Bureau’s hierarchy as “rotted at its core” and embracing a “systemic culture of unaccountability.” This is a serious cause for concern given the widespread effects that the agency’s use and potential misuse of its authorities can have on the country as a whole.

The Project for Privacy and Surveillance Accountability today released a response from the Office of the Director of National Intelligence to our Freedom of Information Act (FOIA) request seeking agency records regarding its treatment of classified documents.

Our request, filed in September 2020, seeks agency records concerning Executive Order 13526, which includes provisions for classifying, managing, and declassifying documents. We recently received a response that included an internal memo to the ODNI’s Inspector General requesting an investigation for… something. Almost all the rest of the document is blacked out. Under a section of the memo entitled “Summary,” we get redaction in the form of a sea of black. Under “Background,” we get only more sea of black. Under “Compartmentalization,” we get some sea of black, but can tell that the issue has something to do with Controlled Access Programs, an intelligence community way of boxing off need-to-know information regarding sources, methods, and activities. Under “Embarrassment,” we find that the offending program “might violate rules that prevent classification meant to avoid embarrassment because it targets exactly those areas where intelligence runs counter to policy.” Or it might not. Under “Possible Problems with the Execution,” we are told “the program might violate regulations or constitute mismanagement.” Then another sea of black. Under “Oversight,” we are told that “ODNI’s narrow communication might violate laws and regulations requiring Congressional oversight of intelligence activities.” The program’s “broad scope and major impact on a leading national security concern suggests it meets the threshold for notification,” presumably to Congress. So, to sum it up, something someone did in the intelligence community went off the rails. It might have been classified to prevent embarrassment. It might violate regulations or constitute mismanagement serious enough to merit investigation by the ODNI Inspector General. It has a broad scope and major impact on a national security concern, details of which are hidden by a government as ready as a squid to squirt black ink. 
The government’s response brings to mind the title of Joseph Heller’s second novel, Something Happened. For certain, we can now say for our legal efforts that something happened in our government, and it wasn’t good. The lesson here is that it defeats the purpose of the Freedom of Information Act when agencies pretend that a heavily redacted document constitutes a response. More to the point, when many Members of Congress seek information from the agencies, they often are confronted with similar obfuscation tactics. Or, as one character says to another in Squid Game, “you won’t get caught if you hide behind someone.”

“Just One Sign of a Much Larger Privacy Crisis”

In February, we quoted CATO Institute senior fellow Julian Sanchez as saying that the evidence presented by special counsel John Durham against lawyer Michael Sussmann shows an interesting trail that leads from academic researchers, to private cybersecurity companies and security experts, to government snoopers.

Sanchez said: “A question worth asking is: Who has access to large pools of telecommunications metadata, such as DNS records, and under what circumstances can those be shared with the government?” Sanchez’s prescient questions received partial answers today from Sen. Ron Wyden. The Oregon senator released a letter he sent to the Federal Trade Commission asking the agency to investigate Neustar, a company that links Domain Name System (DNS) services of websites to specific IP addresses and the people who use them. Such companies, Sen. Wyden wrote, “receive extremely sensitive information from their users, which many Americans would want to remain private from third parties, including government agencies acting without a court order.” Some websites cited by the senator that consumers may visit but would not want known are the National Suicide Prevention Hotline, the National Domestic Violence Lifeline, and the Abortion Finder service. Sen. Wyden wrote that Neustar, under former executive Rodney Joffe, sold data for millions of dollars to Georgia Tech, but not for purely academic research. Emails obtained by Sen. Wyden purportedly show that the FBI and DOJ “asked the researchers to run specific queries and that the researchers wrote affidavits and reports for the government describing their findings.” Because Neustar obtained data from an acquired company – and that company explicitly promised never to sell users’ data to third parties – Neustar violated that promise. Sen. Wyden says it is FTC policy that privacy promises to consumers must be honored when a company and its data change ownership. “Senator Wyden provides sufficient reason for the FTC to open an investigation,” said Gene Schaerr, general counsel of Project for Privacy & Surveillance Accountability (PPSA). “But there is more reason for the judiciary committees of both houses of Congress to hold in-depth hearings. 
There are abundant signs that this story is just one example of a much bigger privacy crisis.” Schaerr noted that intelligence and law enforcement agencies, from the Internal Revenue Service to the Drug Enforcement Administration, Customs and Border Protection, as well as the FBI, assert they can lawfully avoid the constitutional requirement for probable cause warrants by simply buying Americans’ personal information from commercial data brokers. “Data from apps most Americans routinely use are open to warrantless examination by the government,” Schaerr said. “The Founders did not write the warrant requirement of the Fourth Amendment with a sub-clause, ‘unless you open your wallet.’ These practices are explicitly against the spirit and letter of the U.S. Constitution. Americans deserve to know how many agencies are buying data, how many companies are selling it, and what is being done with it.”

The Internet of Things (IoT), long promised, is already here. It is arriving incrementally, in devices from coffee makers to cars to refrigerators that send voluminous quantities of our personal information to the cloud. As the IoT knits together, consumers need to know how our information is being collected.

Most people are unaware that refrigerators, washers, dryers, and dishwashers now often have audio and video recording components. By 2026, over 84 million households will have smart devices, each one a node within a seamless web of personal information. But how will this storehouse of personal data be regulated? Looking ahead to the growing hazards of the near-future, Sen. Maria Cantwell (D-WA), and Sen. Ted Cruz (R-TX), introduced the Informing Consumers about Smart Devices Act. This legislation would require the Federal Trade Commission to create reasonable disclosure guidelines for products that have video or audio recordings. “Most consumers expect their refrigerators to keep the milk cold, not record their most personal and private family discussions,” Sen. Cantwell said. We would make the larger point that Americans shouldn’t have to think about what they say or do in the presence of their appliances. (Although it would be nice to have a smart refrigerator that slaps our hand after 9 p.m.) The greater issue is that all the data that apps, and perhaps now our smart appliances, extract from us can be accessed by government agencies without any need to obey the constitutional requirement to obtain a warrant. All an agency needs to do to obtain our personal information is to purchase it from a private data broker. That’s all the more reason to pass the Fourth Amendment Is Not For Sale Act. In Christopher Nolan’s magnificent movie The Dark Knight, Bruce Wayne presents his chief scientist, Lucius Fox, with a sonar technology that transforms millions of cellphones into microphones and cameras. Fox surveys a bank of screens showing the private actions of people around the city.

The character, played by Morgan Freeman, takes it all in and then declares the surveillance to be “beautiful, unethical, dangerous … This is wrong.” What was fiction in 2008 became reality a few years later with Pegasus: zero-click spyware that allows hackers to infiltrate cellphones and turn them into comprehensive spying devices, no sonar needed. A victim need not succumb to phishing. Possessing a cellphone is enough for the victim to be tracked and recorded by sound and video, as well as to expose the victim’s location history, texts, emails, images, and other communications. This spyware created by the Israeli NSO Group might have originally been developed, as most of these surveillance technologies are, to catch terrorists. It has since been used by various dictatorships and cartels to hunt down dissidents, activists, and journalists, sometimes marking them for death – as it did in the cases of Jamal Khashoggi and Mexican journalist Cecilio Pineda Birto. PPSA reported earlier this year that the FBI had purchased a license for Pegasus but has been keeping it locked away in a secure office in New Jersey. FBI Director Christopher Wray has assured Congress that the FBI was keeping the technology for research purposes. Now, Mark Mazzetti and Ronen Bergman of The New York Times have updated their deep dive into FBI documents and court records about Pegasus produced by a Freedom of Information Act request. PPSA waded through these now-declassified documents, half of each page blanked out by censors. What we could see was alarming. One document, dated Dec. 4, 2018, pledged that the U.S. government would not sell, deliver, or transfer Pegasus without written approval from the Israeli government. 
The letter certified that “the sole purpose of end use is for the collection of data from mobile devices for the prevention and investigation of crimes and terrorism, in compliance with privacy and national security laws.” Since many in the national security arena and their allies assert that Executive Order 12333 gives intelligence agencies unlimited authority, the restraining influence of privacy and national security laws is questionable. And true to form, the FBI documents show that the agency did, in fact, give serious consideration to using Pegasus for U.S. criminal cases.

Why the turnaround? It was at that time that a critical mass of Pegasus stories – with no lack of murders, imprisonments, and political scandals – emerged in the world press. That is surely why the FBI left this hot potato in the microwave. One wonders, however, what to make of the attempt of a U.S. military contractor, L3Harris, to purchase NSO earlier this year. If the FBI was out of the picture, was this aborted acquisition an effort by the CIA to lock down NSO and its spyware menagerie? And if the CIA has found some other route to possess this technology – and to be frank, they’d be guilty of malfeasance if they didn’t – is the agency staying within its no-domestic-spying guardrails in deploying this invasive technology? Recent revelations of bulk surveillance by the CIA do not inspire confidence. Nor can we discount what the FBI might do in the future. Despite the FBI’s decision to avoid using the technology, Mazzetti and Bergman report that an FBI legal brief filed in October stated: “Just because the FBI ultimately decided not to deploy the tool in support of criminal investigations does not mean it would not test, evaluate and potentially deploy other similar tools for gaining access to encrypted communications used by criminals.” No doubt, targeted use of such technologies would catch many fentanyl dealers, human traffickers, and spies. But as Lucius Fox asks, “at what cost?”

Thomas Germain on Gizmodo has an alarming piece on research from two app developers, Tommy Mysk and Talal Haj Bakry, who claim that despite Apple’s explicit promise to allow you to turn off all tracking, Apple still tracks you.

Apple advertises its ability to turn off iPhone tracking on its privacy settings. But according to Mysk and Bakry, after turning off tracking, Apple continues to collect data from many iPhone apps, including the App Store, Apple Music, Apple TV, Books, and Stocks. They found the analytic control and other privacy settings had no discernable effect on Apple’s data collection. “Opting-out or switching the personalization options off did not reduce the amount of detailed analytics that the app was sending,” Mysk told Gizmodo. “I switched all the possible options off, namely personalized ads, personalized recommendations, and sharing usage data and analytics.” Apple still continued to track. What could be at stake for consumers? Germain wrote: “In the App Store, for example, the fact that you’re looking at apps related to mental health, addiction, sexual orientation, and religion can reveal things you might not want to be sent to corporate servers.” Germain concedes that Apple may not be using this information, but it is impossible to know since Apple has not responded. Perhaps a hint of an answer was foreshadowed by Craig Federighi, Senior Vice President of software engineering, when he recently told The Wall Street Journal that “quality advertising and product privacy could coexist.” That is far too vague to explain how Apple’s explicit privacy promises work in the real world. PPSA calls on Apple to provide a full explanation of how it treats digital privacy. “The First Amendment bars the government from deciding for us what is true or false, online or anywhere,” the ACLU recently tweeted. “Our government can’t use private pressure to get around our constitutional rights.”

The ACLU responded to a report from Ken Klippenstein and Lee Fang of The Intercept news organization that the federal government works in secret to suggest content that social media organizations should suppress. The Intercept claims that years of internal DHS memos, emails, and documents, as well as a confidential source within the FBI, reveal the extent to which the government works secretly with social media executives in squashing content. After a few days of cool appraisal of this story, we have to say we have more questions than answers. It is fair to note that The Intercept has had its share of journalistic controversies with questions raised regarding the validity of its reporting. It also appears that this report is significantly sourced on a lawsuit filed by the Missouri Attorney General, a Republican candidate for the U.S. Senate. We’ve also sounded out experts in this space who speculate that much of the content government is flagging is probably illegal content, such as Child Sexual Abuse Materials. There is also reason for the government to track and report to websites state-sponsored propaganda, malicious disinformation, or use of a platform by individuals or groups that may be planning violent acts. If Russian hackers promote a fiction about Ukrainians committing atrocities with U.S. weapons – or if a geofenced alert is posted that due to the threat of inclement weather, an election has been postponed – there is good reason for officials to act. The government is in possession of information derived from its domestic or foreign information-gathering that websites don't have, and the timely provision of that information to websites could be helpful in removing content that poses a threat to public safety, endangers children, or is otherwise inappropriate for social media sharing. It would certainly be interesting to know whether the social media companies find the government’s information-sharing efforts to be helpful or whether they feel pressured. 
The undeniable problem here is the secret nature of this program. Why did we have to find out about it from an investigative report? The insidious potential of this program is that we will never know when information has been suppressed, much less if the reason for the government’s concern was valid. The Intercept reports that the meeting minutes appended to Missouri Attorney General Eric Schmitt’s lawsuit include discussions that have “ranged from the scale and scope of government intervention in online discourse to the mechanics of streamlining takedown requests for false or intentionally misleading information.” In a meeting in March, one FBI official reportedly told senior executives from Twitter and JPMorgan Chase “we need a media infrastructure that is held accountable.” Does she mean a media secretly accountable to the government? Klippenstein and Fang report a formalized process for government officials to directly flag content on Facebook or Instagram and request that it be suppressed. The Intercept included the link to Facebook’s “content request system” that visitors with law enforcement or government email addresses can access. The Intercept reports that the purpose of this program is to remove misinformation (false information spread unintentionally), disinformation (false information spread intentionally), and malinformation (factual information shared, typically out of context, with harmful intent). According to The Intercept, the department plans to target “inaccurate information” on a wide range of topics, including “the origins of the COVID-19 pandemic and the efficacy of COVID-19 vaccines, racial justice, U.S. withdrawal from Afghanistan, and the nature of U.S. support to Ukraine.” The Intercept also reports that “disinformation” is not clearly defined in these government documents. 
Such a secret government program may include information gathered from activities that violate the Fourth Amendment prohibition on accessing personal information without a warrant. It would also be, to amplify the spirited words of the ACLU, a Mack Truck-sized flattening of the First Amendment. One cannot ignore the potential that the government is doing more than helpfully sharing information with websites along with a suggestion that it be taken down. Is the information-sharing accompanied by pressure exerted by the government on the website? From the information now available, we simply don't know. Bottom line: if these allegations are true, the U.S. government in some cases may be secretly determining what is and what is not truth, and on that basis may be quietly working with large social media companies behind the scenes to effect the removal of content. So, the possible origin of COVID-19 in a Chinese laboratory was deemed suppressible, until U.S. intelligence agencies reversed course and determined that a man-made origin of the virus is, in fact, a possibility. And the U.S. withdrawal from Afghanistan? Is our government suppressing content that suggests that it was somehow a less-than-stellar example of American power in action? If these allegations are true, Jonathan Turley, George Washington University professor of law, is correct in calling this “censorship by surrogate.” This program, which Klippenstein and Fang report is becoming ever more central to the mission of DHS and other agencies, is not without its wins. 
“A 2021 report by the Election Integrity Partnership at Stanford University found that of nearly 4,800 flagged items, technology platforms took action on 35 percent – either removing, labeling, or soft-blocking speech, meaning the users were only able to view content after bypassing a warning screen.” On the other hand, the Stanford research shows that in 65 percent of the cases websites exercised independent judgment to maintain the content unmoderated notwithstanding the government's suggestion. After mulling this over for a few days, we propose the following:

There is no reason why the government cannot stand behind its finding that a given post is the product of, say, Russian or Chinese disinformation, or a call to violence, or some other explicit danger to public safety. But we need to know if the most powerful media in existence is subject to editorial influence from the secret preferences of bureaucrats and politicians. If so, this secret content moderation must end immediately or be radically overhauled.

Evan Greer and Anna Bonesteel of Fight for the Future have an impassioned piece on NBC News’ Think on the effects of near-ubiquitous doorbell cameras like Amazon’s Ring, Google’s Nest, and Wyze. Reading their piece feels like being the proverbial frog that finally understands it is already in boiling hot water.

Greer and Bonesteel write: “Devices like Ring and the apps associated with them are made to keep us on constant alert. They ping us with notifications, demanding our attention, and offer ‘infinite scroll’ like Facebook and Instagram, but for neighborhood crime. These devices make watching one another constantly feel acceptable, expected and even addicting.” As we’ve reported, Amazon encourages customers to share images with about 2,000 police and fire departments. Greer and Bonesteel write that Amazon is “effectively giving police an easy push-button portal to request video from Ring camera owners in exchange for officers’ help in marketing Amazon products.” They add that “Ring’s lax security practices in the past have allowed stalkers and hackers to break into people’s cameras … This dystopian vision of a private police camera on every home would have been unthinkable a generation ago.” We would add to that observation the disturbing fact that general counsels of federal law enforcement and intelligence agencies assert a right to purchase Americans’ personal data from digital data brokers without a warrant. In China, the erection of universal surveillance is the result of a deliberate campaign by the Chinese Communist Party to watch and listen in on everyone. In the United States, a similar Panopticon is being erected, piece by piece, out of desire to gain market share for doorbell cameras, lawn furniture, and home fitness equipment sold online. But the destination is beginning to look the same. Carolyn Iodice of Clause 40 Foundation has penned a brilliant analysis and history of the Foreign Intelligence Surveillance Act (FISA), a worldly examination of how that law operates in practice. Briefly put, FISA is a statute that is often treated by the government not as law that must be obeyed, but as a potpourri to mask the stench of illicit surveillance.

Iodice begins her paper with a report issued earlier this year by Sens. Ron Wyden and Martin Heinrich that the CIA has secretly gathered Americans’ records as part of a warrantless bulk data collection program. This program, the senators noted, works “entirely outside the statutory framework that Congress and the public believe govern this collection, and without any of the judicial, congressional, or even executive branch oversight that comes with FISA collection.” To enter the world of FISA is to enter Alice’s Wonderland where agency general counsels talk backwards and agency chiefs assert six impossible things before breakfast. Iodice makes a bold statement in the beginning that the rest of her paper validates: “In the context of FISA, the government has succeeded in violating the law by using implausible interpretations of statutory language and even by evading the statute entirely. Of course, it’s not uncommon for the executive branch to overstep its statutory authorities, but if FISA is understood to be legally binding on the government’s surveillance activities in the same way that, for instance, the EPA’s authority to set national air quality standards is granted and defined by the Clean Air Act, then the flagrancy and frequency of the government’s unlawful surveillance activities is puzzling. If FISA—a law duly passed by Congress and signed by the president—sets legal rules for surveillance programs, why does the government keep flouting them?” Unlike with the Clean Air Act, she explains, with FISA there is no agreement where the lines exist between legislative, judicial, and executive authority. Worse still, there is a lack of agreement how far executive authority can be extended when national security is invoked. The need for the Fourth Amendment’s requirement for a probable cause warrant in criminal cases is clear, even if that principle is often now observed in the breach. 
But the Supreme Court has not supplied much guidance on how the Fourth Amendment applies to operations within the United States that are for intelligence purposes. The rest of Iodice’s paper tracks the steady weakening of FISA in the post-9/11 world. This paper is a timely primer for what promises to be a key surveillance debate: By the end of next year, FISA’s Section 702 must be reauthorized or expire. Section 702 grants the intelligence community the authority to surveil foreign intelligence targets. While Fourth Amendment protections prevent Americans from being targeted, the law allows the communications of Americans to get swept up in “incidental” collection. This loophole has been extended to whatever width or shape the government needs to do whatever it wants. Iodice concludes that if Congress reasserted its authority, or the courts resolved the Fourth Amendment and separation-of-powers issues in FISA, then FISA would operate more like a statute should. In the meantime, civil liberties champions in Congress need to be deadly serious about holding up reauthorization of Section 702 if demands for serious FISA reforms are not met.

Last year, we reported on Apple’s plan to open a digital backdoor on CSAM, or Child Sexual Abuse Material. We reported that a content-flagging system was not just invasive of people’s privacy, but it could open a backdoor for China to use the technology to persecute dissidents and spy on Americans.

Throughout the privacy discussion, the European Union has generally led the world in pushing for higher standards for digital privacy, often challenging the United States to follow its lead. Now, in the necessary drive to detect and prosecute those who abuse children, the EU Commission is driving a proposal that could result in the scanning of every private message, photo, and video to detect CSAM. It is also proposing using software to seek out adults engaged in “grooming” children to be victimized. Every decent person agrees that we need to be aggressive in rooting out and prosecuting adults who exploit children. What could go wrong with the EU proposal? Joe Mullin of the Electronic Frontier Foundation reports that the Commission “wants to open the intimate data of our digital lives up to review by government-approved scanning software, and then checked against databases that maintain images of child abuse.” Private digital conversations, even for Americans, will no longer be truly private. Problem: The detection software produces far more false positives than catches. Mullin writes: “Once the EU votes to start running the software on billions more messages, it will lead to millions of more false accusations. These false accusations get forwarded on to law enforcement agencies. At best, they’re wasteful; they also have potential to produce real-world suffering … That is why we shouldn’t waste efforts on actions that are ineffectual and even harmful.” We would add that PPSA is concerned that technology developed for an admirable purpose is technology that will soon be used for any purpose.

“Why Elon Musk’s Idea of ‘Free Speech’ Will Help Ruin America,” reads a headline in the liberal magazine The New Republic.
Bottom line – the sale of Twitter to Elon Musk “means that lies and disinformation will overwhelm the truth and the fascists will take over.” “Stop the Twitterverse – I Want to Get Off,” writes Debra Saunders in the conservative American Spectator a few weeks before Elon Musk’s acquisition of Twitter became inevitable. From left and right, cynicism is the dominant reaction to the potential of Twitter under Elon Musk’s direction. The left hates Twitter because it can be abused by noxious personalities with extreme politics. The right hates Twitter because of a perception among conservatives that Twitter takes out the magnifying glass only when evaluating conservative speech. Both sides have become so used to distortion and the failure of public enterprises and personalities that they have come to welcome it. We’ve even started to root for failure. There is an emotional comfort to always assuming the worst will happen – you will never be disappointed. E.K. Hornbeck, the journalist character in Inherit the Wind, captured the mentality of our times in a play written by Jerome Lawrence and Robert E. Lee half a century before the emergence of social media: “Cynical? That’s my fascination.” Social media has elevated Hornbeckism and taught us not just to expect the worst, but to celebrate it. We should pause, then, to take note that on the day Elon Musk visited the headquarters of Twitter as he assumed ownership, the billionaire released a surprisingly sweet note to advertisers about the direction the platform will take.

Musk wrote that he bought Twitter “because it is important to the future of civilization to have a common digital town square, where a wide range of beliefs can be debated in a healthy manner, without resorting to violence. There is currently great danger that social media will splinter into far-right wing and far-left wing echo chambers that generate more hate and divide our society.” He wrote that the “relentless pursuit of clicks” of traditional and social media fuels and caters to polarized extremes. Musk admits that failure is a real possibility for him and that he must allow some degree of content moderation to keep Twitter from becoming a “free-for-all hellscape.” Musk and his team face many granular decisions between statements that are edgy and even offensive to many, and those that are over the line. That line will probably waver back and forth as Twitter experiments with a broader array of speech and speakers. Security will also need to be addressed. A fired former senior executive of Twitter, Peiter “Mudge” Zatko, testified before the Senate Judiciary Committee that there are “no locks on the doors” at Twitter when it comes to securing users’ data. Twitter, he said, had been infiltrated by foreign spies, including actors on behalf of the People’s Republic of China, seeking Americans’ personal data. It will be up to Musk to assess and if necessary correct security flaws. He will lead a team that must be capable of executing operations while bringing a more open-minded ethos to the Twitterverse. We can be certain that there will be mistakes, embarrassments, policies made and revoked. But Elon Musk’s rockets exploded on the launchpad before he got SpaceX right. Maybe the same will happen this time. We should all hope so. As Twitter evolves, stumbles, evolves some more, we should remain calm and continue to cheer for the platform’s success. There’s nothing quite like it.
And if Twitter fails because we cannot as a nation manage a dialogue, then we will all fail as well.

Chris Gilliard in The Atlantic describes a day of “luxury surveillance” – what an affluent consumer experiences by being willing to have his heartbeat, sleep, fitness, mood, digital orders, and daily queries continuously tracked.

This is not, Gilliard writes, a dystopian vision. In Gilliard’s “day in the life” description all the services and devices are current Amazon products endowed with what the company calls “ambient surveillance.” They could just as easily be Apple Watches, Apple, Samsung or Google smartphones, or Google Nest devices. What could be wrong, then, with consumers by the millions opting into ambient surveillance? Gilliard sees a lot wrong. He offers a cautionary note from personal experience: “Growing up in Detroit under the specter of the police unit STRESS – an acronym for ‘Stop the Robberies, Enjoy Safe Streets’ – armed me with a very specific perspective on surveillance and how it is deployed against Black communities. A key tactic of the unit was the deployment of the surveillance in the city’s ‘high crime’ areas. In two and a half years of operation during the 1970s, the unit killed 22 people, 21 of whom were Black.” Now, Gilliard writes, “think of facial recognition falsely incriminating Black men, or the Los Angeles Police Department requesting Ring-doorbell footage of Black Lives Matter protests.” We would add that one problem with luxury surveillance is that all this data being compiled on us can be easily acquired by local law enforcement, as well as by federal agencies ranging from the Department of Defense to the Department of Homeland Security. It is one thing to be surveilled in order to have an ad slipped into your social media feed. It is something else to find a SWAT team knocking down your door at dawn. Luxury surveillance is a boon for consumers until it isn’t. All the more reason why Americans should support the Fourth Amendment Is Not for Sale Act, which would at least constrain the ability of the government to get around the Constitution by buying our most personal information.

Charles C.W. Cooke in National Review recently penned a provocative essay that says what some conservative Republicans and progressive Democrats are thinking – dismantle the FBI!

Cooke makes a case that ever since J. Edgar Hoover took over the Bureau of Investigation, the FBI has been “a violent, expansionist, self-aggrandizing, and careless outfit that sits awkwardly within the American constitutional order.” Cooke presents the FBI’s parade of horribles: J. Edgar Hoover presented President Truman with a plan to suspend habeas corpus and put 12,000 Americans into military facilities and prisons at the outbreak of the Korean War. The FBI under Hoover’s leadership tried to convince Dr. Martin Luther King Jr. to commit suicide. It helped presidents destroy their enemies and used blackmail to intimidate the FBI’s critics (paranoia fueled by the likely fact that Hoover himself was eminently blackmailable). It doubled down on a macho confrontation with David Koresh, clearly a psychopath, leading to the deaths of 75 people, 17 of them children. We would add to that list a bureau headquarters that actively blocked investigations from the field that could have stopped 9/11. Many have more recent reasons to suspect the FBI is rigging its investigations. In recent years, an FBI lawyer was caught and convicted of presenting altered evidence and lying to the Foreign Intelligence Surveillance Court in an effort to hide Carter Page’s service to the CIA. The FBI today has excellent justification to pursue those who invaded and trashed the U.S. Capitol on Jan. 6, and perhaps reason to pursue an investigation of former President Donald Trump’s handling of classified material – but these investigations will always be suspect to millions of Americans because of the FBI’s involvement in partisan forgery and in peddling the Steele Report, which the FBI knew at the time was unreliable. On the other side of the ideological fence, the FBI has employed invasive surveillance techniques to spy on Americans who exercised their First Amendment rights by protesting police misconduct.
So Cooke’s cry to dismantle the FBI, once a fringe opinion, is sure to have resonance with many on the right and left. As outrageous as the FBI has been at times, however, we counsel critics to remember its value in keeping us safe from terrorists, human traffickers, cyber-criminals and foreign intelligence agents from Russia and China. And make no mistake, Russian and Chinese agents and their subordinated or blackmailed helpers are in America in force and doing great harm to our country. Fighting these threats are some of the most capable and patriotic men and women we’ve ever met. So what to do? Cooke offers a list of potential reforms he had toyed with before deciding to argue for the wholesale dismantlement of the FBI. Cooke’s list is well thought-out and worthy of a second look and of being quoted at length:

We endorse Cooke’s strong list of reforms, to which we would add two of our own.

In looking at the history of the FBI, strong leadership has often come from its field offices. But leadership in the top tiers of the J. Edgar Hoover Building has shown itself to be entrenched in Washington power-seeking and socially enmeshed with media and political circles. If one wants to bring about change, perhaps a good place to start would be to divert resources taken up by HQ and spread them out of Washington and into the field offices. Andrew McCarthy, in a reply to Cooke in National Review, promotes the idea of separating the intelligence function of the FBI from its law enforcement function. This would return the FBI to being an agency dedicated solely to law enforcement. It would create an American version of the UK’s MI5 for the purpose of counterintelligence. Like MI5, the new agency would have no police powers (though the creation of a 19th intelligence agency in the U.S. government would undoubtedly bring fresh concerns about surveillance and privacy). Another needed change would be to instill into the culture of headquarters something similar to that of the senior ranks of the U.S. military, which eschews any sign of partisanship. Many generals and admirals will not discuss their political views. Some make it a point of pride not to vote. This may be asking too much of civilian officials, but if an agent is assigned to a team that deals with political crimes, with First Amendment implications that resonate nationally, being an outspoken partisan should be reason enough for an immediate transfer to some other important line of duty. |


|
